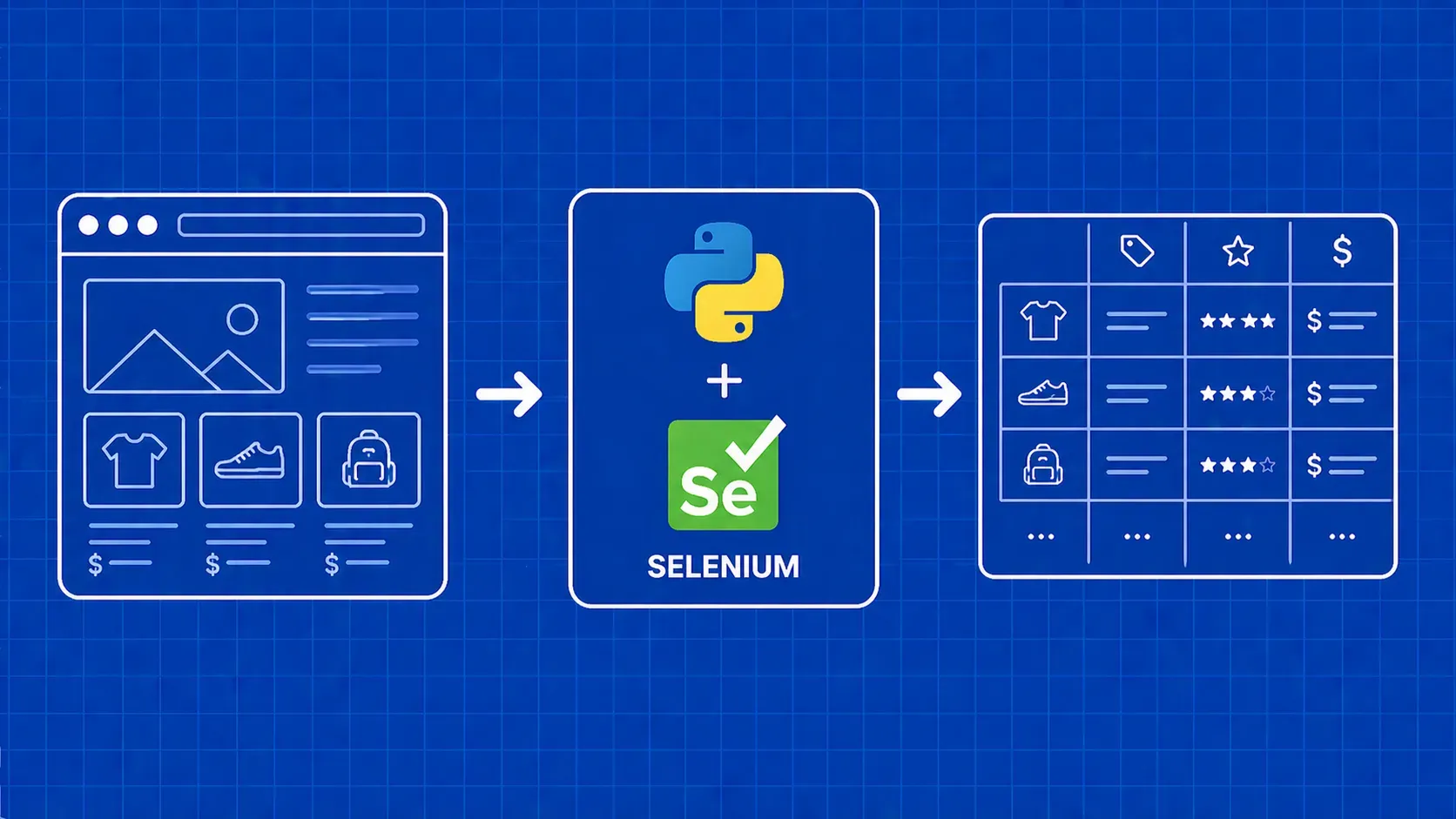

TL;DR: Selenium lets you scrape JavaScript-heavy websites by driving a real browser from Python code. This tutorial walks you through every phase: installing Selenium, configuring Chrome, locating and interacting with elements, handling waits and pagination, exporting clean data, and scaling your scraper with proxies, Selenium Grid, and API-based alternatives.

Selenium is a browser automation framework that controls a real browser instance (Chrome, Firefox, Edge, and others) through code. Although it was originally built for testing web applications, it has become one of the most widely used tools for web scraping, especially on sites where JavaScript renders the content you need.

If you have tried scraping a single-page application or an infinite-scroll feed with `requests` and BeautifulSoup, you already know the problem: the HTML you download is often little more than an empty shell. The actual data loads only after JavaScript runs, and a plain HTTP client never executes that JavaScript. Selenium solves this by launching a full browser, loading the page the way a visitor's browser would, and then giving you programmatic access to the resulting DOM.
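As a rough sketch of that idea, the snippet below loads a URL in headless Chrome and returns the HTML of the DOM after the browser has processed the page. The helper name `fetch_rendered_html` and its optional `driver` parameter are illustrative assumptions, not part of Selenium. The sketch also assumes Selenium 4.6 or later (so the matching driver binary is resolved automatically) and a recent Chrome that accepts `--headless=new`.

```python
def fetch_rendered_html(url, driver=None):
    """Load `url` in a browser and return the DOM serialized as HTML.

    `driver` is a hypothetical convenience parameter: pass an existing
    WebDriver to reuse it, or leave it as None to start headless Chrome.
    """
    owns_driver = driver is None
    if owns_driver:
        # Imported lazily so the helper can be exercised without a browser.
        from selenium import webdriver

        options = webdriver.ChromeOptions()
        # Assumption: a recent Chrome build that supports the new headless mode.
        options.add_argument("--headless=new")
        driver = webdriver.Chrome(options=options)

    try:
        # The browser fetches the page and runs its JavaScript.
        driver.get(url)
        # page_source reflects the current DOM, not the raw HTTP response body.
        return driver.page_source
    finally:
        # Only close a browser that this function started itself.
        if owns_driver:
            driver.quit()
```

Calling `fetch_rendered_html("https://example.com")` would start a browser, load the page, and return its markup. Note that `driver.get` only waits for the initial page load, so content fetched later by JavaScript may still need an explicit wait before it appears in `page_source`.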

This tutorial covers every practical step of web scraping with Selenium in Python: environment setup, element location strategies, waiting for dynamic content, scrolling, pagination, data export, proxy integration, and performance tuning. By the end, you will have a working end-to-end scraper and a clear picture of when Selenium is the right choice versus lighter alternatives.