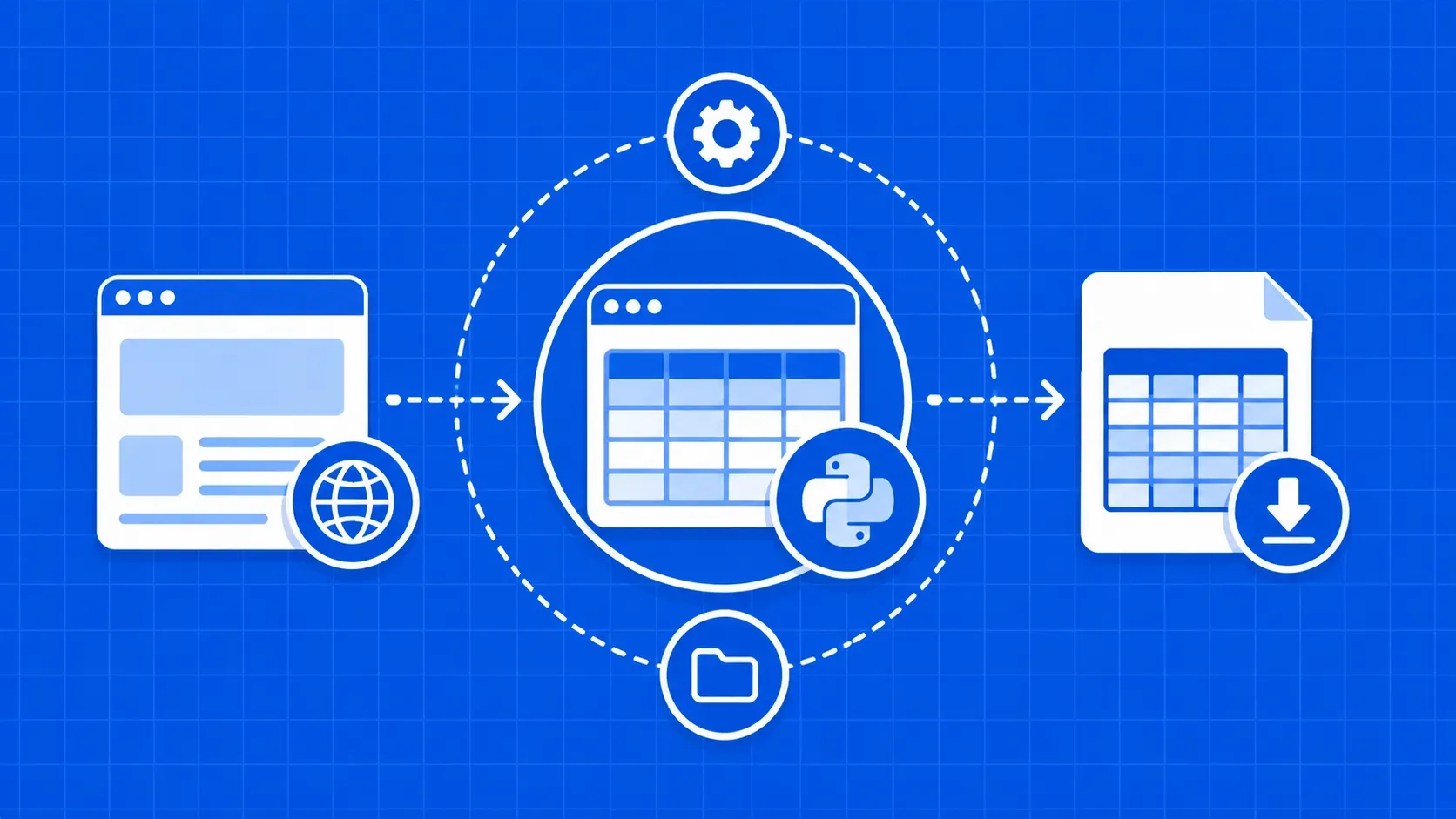

TL;DR: Most HTML tables can be scraped with a single line of `pandas.read_html`. When the table is paginated, JavaScript-rendered, or has merged headers, switch to Requests + BeautifulSoup or a headless browser like Playwright. This guide gives you a decision matrix, working code for all three approaches, and the cleaning steps that turn scraped rows into pipeline-ready data.

Tabular data is everywhere on the public web, from Wikipedia infoboxes and stock screeners to government statistics, sports stats, and product comparison pages. If you know how to scrape HTML tables using Python, you can turn those rows into clean DataFrames, JSON documents, or rows in your own database in minutes.
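Here is a minimal sketch of the one-line pandas approach. It assumes pandas and a parser backend (lxml, or BeautifulSoup with html5lib) are installed. The inline HTML and its product rows are hypothetical stand-ins for a downloaded page:

```python
from io import StringIO

import pandas as pd

# Hypothetical markup standing in for a page you have already downloaded.
html = """
<table>
  <thead>
    <tr><th>Product</th><th>Price</th></tr>
  </thead>
  <tbody>
    <tr><td>Widget A</td><td>10</td></tr>
    <tr><td>Widget B</td><td>20</td></tr>
  </tbody>
</table>
"""

# read_html returns a list containing one DataFrame per <table> it finds.
# Wrapping literal HTML in StringIO avoids the deprecation warning that
# pandas 2.1+ emits when a raw HTML string is passed directly.
tables = pd.read_html(StringIO(html))
df = tables[0]
print(df)
```

In a real scraper you would usually pass a URL or the downloaded page instead of an inline string. On pages with many tables, the `match` argument keeps only the tables whose text matches a given string or regex.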

The catch is that "HTML table" is a deceptively broad category. Some tables sit cleanly inside `<table>` markup that pandas can parse in one line. Others are hand-rolled grids of `<div>` elements, are paginated across dozens of pages, or are only populated after JavaScript runs in the browser. A method that works perfectly on Wikipedia might find nothing at all on a single-page app: a BeautifulSoup selector silently returns an empty list, and `pandas.read_html` raises a "No tables found" `ValueError`.
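One cheap way to spot the empty-result case before picking a method is to count the `<table>` elements in the raw HTML the server actually sends. The sketch below uses only the standard library's `html.parser`. The `TableCounter` class, the `count_tables` helper, and both sample pages are hypothetical illustrations:

```python
from html.parser import HTMLParser


class TableCounter(HTMLParser):
    """Counts <table> start tags in a chunk of HTML."""

    def __init__(self) -> None:
        super().__init__()
        self.tables = 0

    def handle_starttag(self, tag, attrs):
        # HTMLParser lowercases tag names, so <TABLE> and <table> both match.
        if tag == "table":
            self.tables += 1


def count_tables(html: str) -> int:
    """Return how many <table> elements appear in the given HTML."""
    parser = TableCounter()
    parser.feed(html)
    parser.close()
    return parser.tables


# Server-rendered page: the rows are already present in the markup.
static_page = "<html><body><table><tr><td>1</td></tr></table></body></html>"

# Single-page-app shell: an empty mount point that JavaScript fills in later.
spa_shell = '<html><body><div id="root"></div><script src="app.js"></script></body></html>'

print(count_tables(static_page))  # 1
print(count_tables(spa_shell))    # 0
```

If the count is zero but the table is visible in your browser, the data is either laid out with `<div>`s or injected by JavaScript. That points toward BeautifulSoup with custom selectors, or toward a headless browser such as Playwright.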

This guide walks through three Python approaches and is organized around two practical questions: which method should you reach for, and how do you keep your scraper running when the site changes its markup next quarter?