TL;DR: Amazon product pages are packed with valuable data (prices, ratings, reviews, ASINs), but extracting it reliably requires more than a basic HTTP request. This guide walks you through building a Python scraper with Requests and BeautifulSoup, handling pagination and anti-bot defenses, exporting to CSV or JSON, and feeding the results into LLM workflows. You will also learn when to use a scraping API instead of rolling your own solution.

If you need to scrape Amazon product data at any meaningful scale, you already know the platform does not make it easy. Amazon is the world's largest e-commerce marketplace, reportedly generating north of $500 billion in annual net sales. That makes its product catalog one of the most valuable (and most heavily guarded) datasets on the public web.

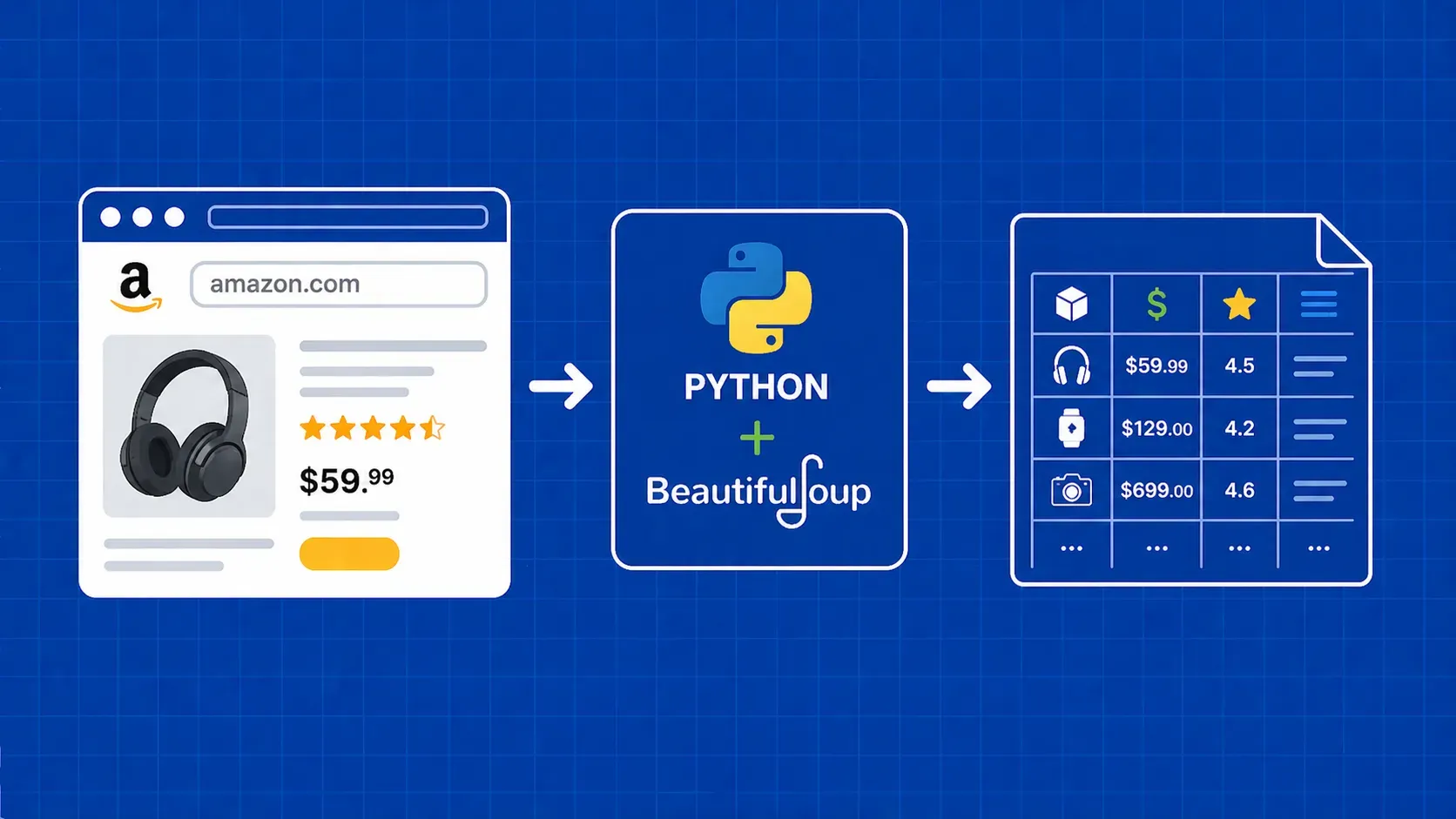

Web scraping Amazon products means programmatically extracting structured information, such as titles, prices, ratings, images, and ASINs, from Amazon's HTML pages. Whether you are building a price monitoring dashboard, running competitive market research, or assembling training data for a machine learning model, the workflow starts with the same fundamentals: send an HTTP request, parse the response, and pull the fields you care about.
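As a rough illustration of those three steps, here is a minimal sketch using Requests and BeautifulSoup. The selectors (`#productTitle`, `span.a-price span.a-offscreen`), the `User-Agent` string, and the function names `fetch_html` and `parse_product` are assumptions made for this example. Amazon's markup changes often, so verify the selectors against a live page before relying on them. The parsing step runs against an inline HTML sample so the sketch works without touching the network.

```python
import requests
from bs4 import BeautifulSoup

# Assumed selectors for illustration only; confirm them against the real page.
TITLE_SELECTOR = "#productTitle"
PRICE_SELECTOR = "span.a-price span.a-offscreen"


def fetch_html(url: str) -> str:
    """Step 1: send an HTTP request and return the response body."""
    # Placeholder User-Agent; a bare request like this is often blocked by Amazon.
    headers = {"User-Agent": "Mozilla/5.0 (compatible; example-scraper)"}
    response = requests.get(url, headers=headers, timeout=10)
    response.raise_for_status()
    return response.text


def parse_product(html: str) -> dict:
    """Steps 2 and 3: parse the HTML and pull out the fields we care about."""
    soup = BeautifulSoup(html, "html.parser")
    title = soup.select_one(TITLE_SELECTOR)
    price = soup.select_one(PRICE_SELECTOR)
    # Missing elements become None instead of raising AttributeError.
    return {
        "title": title.get_text(strip=True) if title else None,
        "price": price.get_text(strip=True) if price else None,
    }


# Made-up HTML that mimics the assumed structure, so parsing can be tried offline.
SAMPLE_HTML = """
<html><body>
  <span id="productTitle">  Example Widget  </span>
  <span class="a-price"><span class="a-offscreen">$19.99</span></span>
</body></html>
"""

product = parse_product(SAMPLE_HTML)
print(product)
```

To try it on a real page, you would pass the result of `fetch_html(url)` to `parse_product`. Keeping the fetch and parse steps in separate functions lets you test the parsing logic against saved HTML without sending live requests.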

The challenge is that Amazon actively blocks automated traffic. CAPTCHAs, IP bans, dynamic HTML, and AWS WAF all stand between you and clean data. This guide covers the full pipeline: environment setup, page structure, a working Python scraper with BeautifulSoup, pagination, anti-bot handling, data export, and even how to pipe your scraped results into an LLM. We will also compare DIY scraping against API and no-code alternatives so you can pick the approach that fits your project.