TL;DR: Puppeteer gives you full control of a headless Chrome instance from Node.js, making it the go-to tool for scraping JavaScript-rendered pages. This guide walks you through installation, selector-based extraction, infinite scroll, form login, request interception, stealth plugins, structured data export, and Docker deployment, so you can move from a toy script to a production-grade scraper.

Web scraping is the practice of programmatically extracting data from websites, and when those sites rely on client-side JavaScript to render their content, a simple HTTP request will not cut it. You need a real browser, or at least something that acts like one. That is exactly the problem Puppeteer was built to solve.

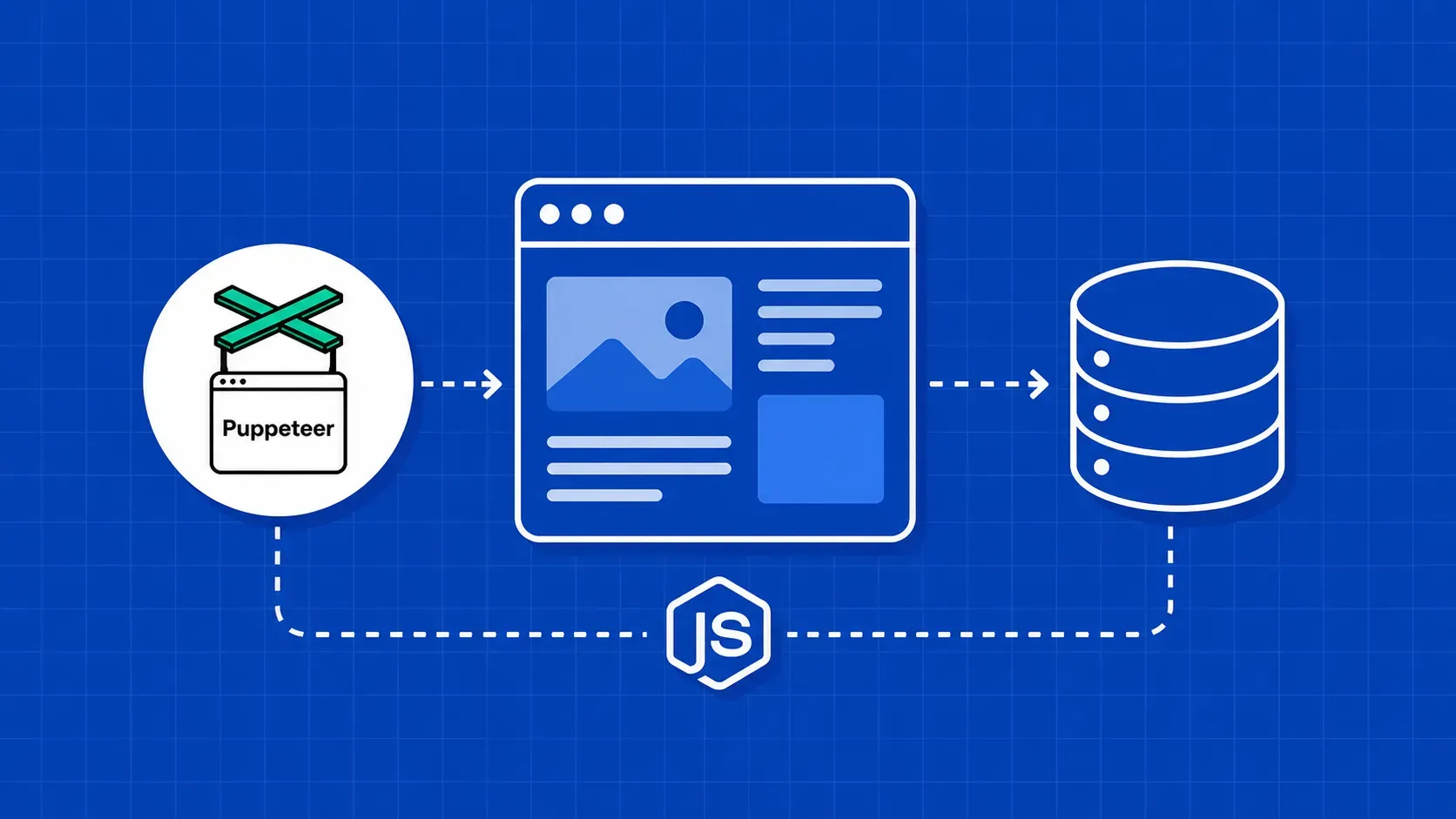

Puppeteer is a Node.js library that drives a headless (or headful) Chrome instance through the Chrome DevTools Protocol. It can click buttons, fill forms, scroll pages, and evaluate arbitrary JavaScript in the page context, then hand the results back to your script. For developers already comfortable with JavaScript, it is one of the most natural paths into headless-browser scraping.
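To make that launch, navigate, evaluate, return cycle concrete, here is a minimal sketch. It assumes `puppeteer` is already installed via npm. The function name `scrapeHeading`, the `h1` selector, and the `networkidle2` wait condition are illustrative choices, not requirements of the library.

```javascript
// Minimal sketch: load a page in headless Chrome and read data from its DOM.
// Assumes `npm install puppeteer` has been run; the `h1` selector is illustrative.

async function scrapeHeading(url) {
  // Required lazily so this file can be loaded without starting a browser.
  const puppeteer = require('puppeteer');
  const browser = await puppeteer.launch({ headless: true });
  try {
    const page = await browser.newPage();
    // Wait until network activity settles so client-side rendering can finish.
    await page.goto(url, { waitUntil: 'networkidle2' });
    // This callback runs inside the page; only its serializable
    // return value is passed back to the Node.js process.
    return await page.evaluate(() => {
      const heading = document.querySelector('h1');
      return {
        title: document.title,
        heading: heading ? heading.textContent.trim() : null,
      };
    });
  } finally {
    // Always close the browser, even if navigation or evaluation fails.
    await browser.close();
  }
}

// Run only when a URL is supplied on the command line.
const targetUrl = process.argv[2];
if (targetUrl) {
  scrapeHeading(targetUrl)
    .then((result) => console.log(result))
    .catch((err) => {
      console.error(err);
      process.exitCode = 1;
    });
}
```

Saved under a hypothetical name such as `scrape.js`, this could be run with `node scrape.js https://example.com`. The `try`/`finally` wrapper is a deliberate choice: a browser left open after a failed run keeps consuming memory, which matters once a scraper runs unattended.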

In this tutorial, you will learn how to set up a Puppeteer project from scratch, extract data from static and dynamic pages, handle pagination and infinite scroll, intercept hidden API calls, avoid bot detection, export your results to JSON and CSV, and deploy the whole thing inside a Docker container. Every code example targets Node.js 18 or later, and we reference the Puppeteer v24 API surface throughout. Whether you are building a price tracker, a lead-generation pipeline, or an academic research tool, the patterns in this guide will get you to production faster.