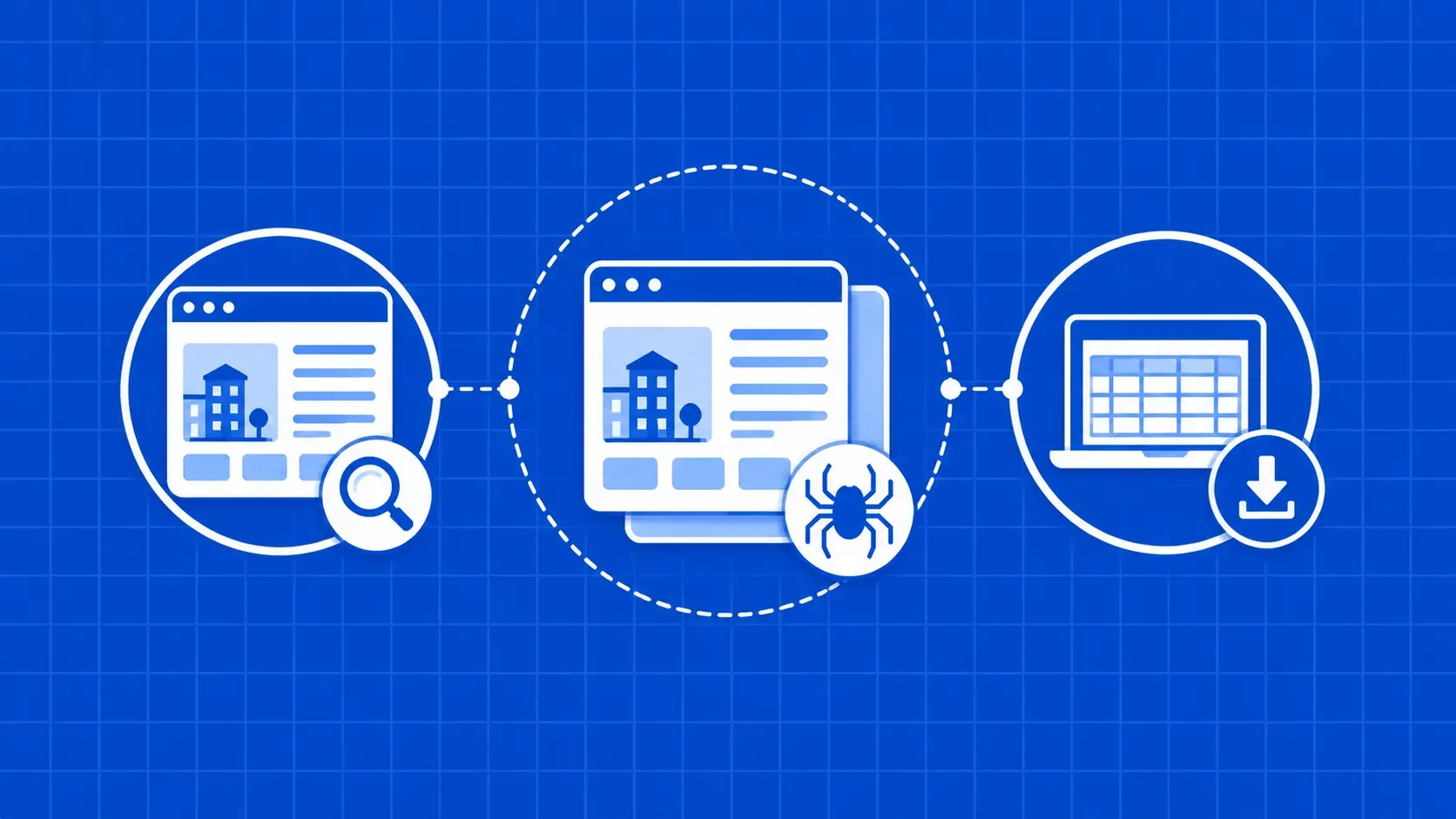

TL;DR: Idealista is the largest property marketplace in Spain, Italy, and Portugal, but it sits behind a serious anti-bot stack that blocks naive scrapers fast. This guide walks you through how to scrape data from Idealista end-to-end in Python, covering site mapping, Selenium with undetected-chromedriver, DataDome handling, proxy rotation, and clean exports, with production hardening competitors usually skip.

Introduction

If you've spent any time trying to figure out how to scrape data from Idealista, you already know the playbook is short and brutal: send a few clean requests, get blocked, swap user-agents, get blocked again, see a captcha, and start over. Idealista is the dominant real estate portal across Spain, Portugal, and Italy, with millions of for-sale and rental listings, which makes it a goldmine for market analysts, buyer agents, and proptech teams. It is also one of the most aggressively defended sites in the category.

This guide is written for intermediate Python developers who want a battle-tested recipe, not a code fragment. You will learn the legal and technical context first, then walk through a concrete Selenium workflow with undetected-chromedriver, then layer in proxies, captcha handling, deduplication, and incremental tracking, with the production realities most tutorials skip.

Why scrape Idealista, and what data is actually worth extracting

Idealista is one of the largest real estate marketplaces in Southern Europe, with millions of for-sale and for-rent listings across the Spanish, Italian, and Portuguese sites. For investor research, comp pricing models, and lead enrichment for agencies, that footprint is hard to replicate from public APIs alone, which is why so many teams ask how to scrape data from Idealista in the first place.

When you plan a scrape, decide upfront which fields you actually need. The listing card and detail pages typically expose:

- Title and listing URL (the canonical /inmueble/<id>/ slug)

- Price and currency, plus price-per-square-meter on detail pages

- Surface area in m², number of rooms and bathrooms

- Description text, listing date, and updated date

- Agent or owner type (private vs. agency), with phone and contact buttons

- Photos, floor plans, and sometimes virtual-tour links

- Approximate coordinates and neighborhood metadata

The Italian (idealista.it) and Portuguese (idealista.pt) sites tend to mirror the Spanish layout, so a single scraper design generally adapts across all three with minor selector tweaks. We will revisit locale differences in the troubleshooting section and the FAQ.

Is scraping Idealista legal? Compliance ground rules

Public listing data is generally considered fair game when collected at respectful rates, but Idealista's terms of service and the EU's GDPR change the math the moment you touch personal data. Agent names, phone numbers, and email addresses are personal data under the GDPR, so storing them at scale typically requires a documented lawful basis, retention limits, and a data-deletion path.

Practical guardrails: respect robots.txt, throttle aggressively, avoid logged-in areas, and strip personal contact details from any dataset you redistribute. Treat this section as orientation rather than legal advice, and run anything sensitive past your counsel before production. The legal posture here is genuinely contested, so verify the current GDPR scope for your specific use case before deploying a scraper at scale.
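To make the robots.txt guardrail concrete, here is a minimal sketch using Python's standard urllib.robotparser. It parses rules you have already downloaded (so it runs offline) and checks whether a URL is allowed for your crawler's user agent. The SAMPLE_RULES text and the "my-research-bot" user agent are made-up placeholders, not Idealista's actual robots.txt; fetch the real file once per run and feed it in.

```python
from urllib.robotparser import RobotFileParser


def build_robots_checker(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt content that was fetched separately (e.g. once per run)."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


def is_allowed(parser: RobotFileParser, user_agent: str, url: str) -> bool:
    """Return True if the parsed rules permit `user_agent` to fetch `url`."""
    return parser.can_fetch(user_agent, url)


# Placeholder rules for illustration only; these are NOT Idealista's real rules.
SAMPLE_RULES = """
User-agent: *
Disallow: /usuario/
Allow: /
"""

checker = build_robots_checker(SAMPLE_RULES)
print(is_allowed(checker, "my-research-bot",
                 "https://www.idealista.com/venta-viviendas/madrid-madrid/"))  # True
print(is_allowed(checker, "my-research-bot",
                 "https://www.idealista.com/usuario/perfil"))  # False
```

Checking each URL before it enters the crawl queue keeps the guardrail enforced in code rather than by convention.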

How Idealista detects bots: DataDome, fingerprinting, and rate limits

Most public reporting attributes Idealista's interstitial captcha to DataDome, a commercial bot-mitigation service. Treat that attribution as community consensus rather than an official statement, since Idealista does not publish its detection stack.

Detection happens in layers, and understanding each one is the difference between a scraper that survives a week and one blocked in an hour:

- TLS and JA3 fingerprinting. Python's requests library and many other HTTP clients produce a TLS handshake signature that is trivially distinguishable from a real Chrome session. Even with a perfect User-Agent, the cipher order gives you away.

- Header coherence. Bot detection engines check that Accept, Accept-Language, sec-ch-ua, and User-Agent agree with each other. A Chrome 120 UA paired with a missing sec-ch-ua-platform header is a tell.

- JavaScript challenges. DataDome serves a small JS payload that probes navigator.webdriver, canvas hashes, WebGL renderers, and timing signals. Headless Chrome and stock Selenium fail several of these checks by default.

- Behavioral and IP signals. Velocity, mouse-free navigation, datacenter ASN ranges, and reused cookies all feed into a risk score that triggers the captcha.

Your bot needs a real-browser fingerprint, plausible headers, residential or mobile IPs, and human-like pacing. No single fix is enough.

Choosing a scraping stack: requests/HTTPX vs Selenium vs a scraping API

There is no universally correct answer to how to scrape data from Idealista at scale; the right choice depends on volume, budget, and how much JavaScript you can stomach rendering. Here is a quick decision matrix you can paste into a planning doc.

| Approach | Best for | Speed | DataDome resistance | Maintenance |
|---|---|---|---|---|
| requests (plain HTTP) | Tiny one-off pulls | Fast | Low | Low until blocked |
| HTTPX + parsel | Medium scrape, async-friendly | Very fast | Low to medium | Moderate |
| Selenium + undetected-chromedriver | Reliable per-page extraction | Slow | Medium | High (driver drift) |
| Managed scraping API | Production scale, hands-off | Variable | High | Low |

Pure HTTP stacks are the cheapest path when they work, but they fold the moment Idealista escalates to a JS challenge. Selenium with undetected-chromedriver gives you a real browser fingerprint and DOM execution, at the cost of being significantly slower and more memory-hungry. A managed API hides the proxy and challenge-solving layer entirely, which is the right call once you outgrow a single machine. Most production teams end up combining these: a fast HTTP path for cheap pages, a browser fallback for hardened ones, and a scraping API as the safety net.
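That combined setup can be sketched as a small dispatcher that tries the cheapest fetch first and escalates only when the response looks blocked. The fetchers are injected as plain callables, so you can plug in an HTTPX call, a Selenium-backed function, or a scraping-API client. FetchResult, looks_blocked, and fetch_with_fallback are hypothetical names, and the block heuristic (403/429 or a "captcha"/"datadome" substring, mirroring the manual check used later in this guide) is an assumption to tune against real responses.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class FetchResult:
    status: int
    html: str
    tier: str = ""  # filled in with the name of the tier that succeeded


Fetcher = Callable[[str], FetchResult]


def looks_blocked(result: FetchResult) -> bool:
    """Heuristic: treat 403/429 or challenge markers in the body as a block."""
    body = result.html.lower()
    return result.status in (403, 429) or "captcha" in body or "datadome" in body


def fetch_with_fallback(url: str, tiers: list[tuple[str, Fetcher]]) -> FetchResult:
    """Try each (name, fetcher) tier in order and return the first unblocked result."""
    last_error: Exception | None = None
    for name, fetch in tiers:
        try:
            result = fetch(url)
        except Exception as exc:  # network errors, driver crashes, timeouts
            last_error = exc
            continue
        if not looks_blocked(result):
            result.tier = name
            return result
    raise RuntimeError(f"all tiers failed or were blocked for {url}") from last_error


# Stand-ins for real fetchers, for illustration only.
def fake_http(url: str) -> FetchResult:
    return FetchResult(403, "<html>blocked</html>")


def fake_browser(url: str) -> FetchResult:
    return FetchResult(200, "<html><article class='item'>...</article></html>")


res = fetch_with_fallback("https://www.idealista.com/",
                          [("http", fake_http), ("browser", fake_browser)])
print(res.tier)  # browser
```

Because the parsing layer only sees HTML, swapping or reordering tiers never touches your selectors.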

Setting up your Python project and dependencies

You will want Python 3.10 or newer, a clean virtualenv, and pinned dependencies. Idealista's frontend changes often, so locking versions makes regressions easier to diagnose later.

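python -m venv .venv
source .venv/bin/activate
pip install "selenium==4.*" undetected-chromedriver selenium-wire "blinker<1.8" httpx parsel tenacity python-dotenv

The blinker pin works around a known incompatibility between selenium-wire and blinker 1.8 and later. A practical layout: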

idealista-scraper/
├── .env            # proxy credentials, API keys
├── config.py       # constants, base URLs
├── scraper/
│   ├── driver.py   # uc.Chrome factory + waits
│   ├── crawl.py    # provinces → municipalities → listings
│   └── parse.py    # XPath/CSS extractors
├── storage.py      # JSON/CSV/SQLite writers
└── main.py

Pin the Chrome major version against your installed undetected-chromedriver build; mismatches are the most common cause of silent crashes.

Mapping Idealista's URL structure: homepage, provinces, municipalities, search, property

Before you write a single selector, walk the site by hand. Idealista's URL scheme is fairly regular once you see the pattern, and a clear mental map prevents you from re-crawling the same nodes.

The hierarchy looks roughly like this:

- Homepage: https://www.idealista.com/ (and .it, .pt for the Italian and Portuguese versions)

- Province directory: linked from the bottom of the homepage

- Municipality directory: under each province, a page listing municipalities

- Listing pages: /venta-viviendas/<municipality>/ for sale, /alquiler-viviendas/<municipality>/ for rentals (rental, Italian, and Portuguese URL patterns tend to mirror this, but verify against a live page first)

- Pagination: pagina-2.htm, pagina-3.htm, appended to the listing URL

- Property detail: /inmueble/<id>/, the canonical unique listing identifier

- Recency sort: append ?ordenado-por=fecha-publicacion-desc to surface newly listed properties first

Internalize that inmueble/<id> slug; the rest of the article uses it as the dedup key and change-tracking pivot. A solid CSS selector reference is invaluable here when selectors shift.

How to scrape data from Idealista with Selenium and undetected-chromedriver

With the URL map in hand, you can stand up the actual scraper. The plan: launch a patched Chrome via undetected-chromedriver, navigate to the homepage, and use explicit waits to confirm the DOM rendered before you query it. The next four steps build the crawler in a top-down sweep, from provinces to municipalities to listing cards to pagination.

Step 1: Extract the full list of provinces from the homepage

The Spanish homepage exposes a province directory near the footer. We collect each anchor inside that block, store the visible name, and keep the absolute URL for the next step.

import random  # used for randomized pacing in the later steps
import time

import undetected_chromedriver as uc
from selenium.webdriver.common.by import By
from selenium.webdriver.support.ui import WebDriverWait
from selenium.webdriver.support import expected_conditions as EC


def make_driver():
    opts = uc.ChromeOptions()
    opts.add_argument("--window-size=1366,900")
    # use_subprocess=True is currently required to avoid a destructor
    # bug in some undetected-chromedriver builds; verify against the
    # upstream repo before pinning, since package internals change often.
    return uc.Chrome(options=opts, use_subprocess=True)


def fetch_provinces(driver):
    driver.get("https://www.idealista.com/")
    # Wait until the footer province directory has rendered.
    WebDriverWait(driver, 20).until(
        EC.presence_of_element_located((By.CSS_SELECTOR, "div.locations-list"))
    )
    anchors = driver.find_elements(
        By.XPATH, "//div[contains(@class,'locations-list')]//a"
    )
    # Map visible province name -> absolute URL, skipping empty anchors.
    return {a.text.strip(): a.get_attribute("href") for a in anchors if a.text.strip()}

The first call is likely to hit a DataDome captcha, so wrap it with a manual-solve hook (covered later) on first run. Once your session has a clean cookie, the rest of the crawl usually flows.

Step 2: Crawl each province for its municipalities

Each province page exposes its municipalities through a location_list block. We loop the dictionary from Step 1 and attach each municipality's name and URL underneath its parent province.

def fetch_municipalities(driver, provinces):
    out = {}
    for name, url in provinces.items():
        driver.get(url)
        WebDriverWait(driver, 15).until(
            EC.presence_of_element_located((By.ID, "location_list"))
        )
        anchors = driver.find_elements(By.XPATH, "//ul[@id='location_list']//a")
        out[name] = {
            "url": url,
            "municipalities": [
                {"name": a.text.strip(), "url": a.get_attribute("href")}
                for a in anchors if a.text.strip()
            ],
        }
        time.sleep(random.uniform(2.5, 6.0))  # polite, randomized pacing
    return out

Two things worth flagging: randomized sleeps mimic human browsing more convincingly than fixed delays, and you should checkpoint this nested dict to disk after each province so a crash on province number 30 does not cost you the previous 29.
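For the checkpointing advice above, here is a minimal sketch. It writes the nested dict to a temporary file and atomically swaps it in with os.replace, so a crash mid-write never leaves a half-written checkpoint, and it skips provinces already present when you restart. The function names are hypothetical, and fetch_one stands in for the per-province body of fetch_municipalities (returning that province's municipality list).

```python
import json
import os
import tempfile
from pathlib import Path
from typing import Callable


def load_checkpoint(path: str) -> dict:
    """Return previously saved results, or an empty dict on the first run."""
    p = Path(path)
    return json.loads(p.read_text(encoding="utf-8")) if p.exists() else {}


def save_checkpoint(path: str, data: dict) -> None:
    """Write to a temp file in the same directory, then atomically replace the target."""
    directory = os.path.dirname(os.path.abspath(path))
    fd, tmp = tempfile.mkstemp(dir=directory, suffix=".tmp")
    try:
        with os.fdopen(fd, "w", encoding="utf-8") as f:
            json.dump(data, f, ensure_ascii=False, indent=2)
        os.replace(tmp, path)
    except BaseException:
        os.unlink(tmp)  # remove the partial temp file, keep the old checkpoint
        raise


def crawl_with_checkpoints(provinces: dict, fetch_one: Callable[[str], list],
                           path: str) -> dict:
    """Resume-safe loop: skips provinces already checkpointed, saves after each new one."""
    out = load_checkpoint(path)
    for name, url in provinces.items():
        if name in out:
            continue
        out[name] = {"url": url, "municipalities": fetch_one(url)}
        save_checkpoint(path, out)
    return out
```

Writing to the same directory matters: os.replace is only atomic when source and target are on the same filesystem.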

Step 3: Parse property cards (title, price, area, description, URL)

Listing pages render an article.item node per property, with structured children for the canonical fields. The XPath patterns below use contains(@class, ...) instead of strict equality because Idealista occasionally appends modifier classes to its cards.

from selenium.common.exceptions import NoSuchElementException


def parse_listing_page(driver):
    cards = driver.find_elements(By.XPATH, "//article[contains(@class,'item')]")
    rows = []
    for c in cards:
        try:
            link = c.find_element(By.XPATH, ".//a[contains(@class,'item-link')]")
            url = link.get_attribute("href")
            title = link.text.strip()
            price = c.find_element(
                By.XPATH, ".//span[contains(@class,'item-price')]"
            ).text.strip()
        except NoSuchElementException:
            # Sponsored or malformed cards lack the core nodes: skip rather than abort.
            continue
        if not url:
            continue  # no href, so no usable id
        details = [
            d.text.strip() for d in c.find_elements(
                By.XPATH, ".//span[contains(@class,'item-detail-char')]/span"
            )
        ]
        # The description is optional; fall back to "" instead of dropping the card.
        desc_nodes = c.find_elements(
            By.XPATH, ".//div[contains(@class,'item-description')]"
        )
        description = desc_nodes[0].text.strip() if desc_nodes else ""
        rows.append({
            "id": url.rstrip("/").split("/")[-1],  # /inmueble/<id>/ -> <id>
            "url": url, "title": title, "price": price,
            "details": details, "description": description,
        })
    return rows

Two production notes. First, Idealista interleaves ad cards that look nearly identical to organic ones; the try/except around each card's core fields keeps one bad node from killing the page, while a missing description falls back to an empty string rather than discarding the card. Second, selectors drift; expect to refresh these XPaths every couple of months. Keep them in a single parse.py module so the diff is easy.

Step 4: Follow pagination via the 'Siguiente' link

Idealista uses a numeric pagination scheme with a Siguiente ("next") link wrapped in li.next. Some tutorials recurse on the next URL until it disappears, but unbounded recursion is how you trip rate limits and hit Python's recursion ceiling on dense municipalities. Bound the loop instead.

MAX_PAGES = 40


def crawl_municipality(driver, start_url, max_pages=MAX_PAGES):
    rows, url, page = [], start_url, 0
    while url and page < max_pages:
        driver.get(url)
        WebDriverWait(driver, 15).until(
            EC.presence_of_element_located((By.XPATH, "//main"))
        )
        rows.extend(parse_listing_page(driver))
        try:
            next_link = driver.find_element(
                By.XPATH, "//li[contains(@class,'next')]/a"
            )
            url = next_link.get_attribute("href")
        except Exception:
            url = None  # no "Siguiente" link: last page reached
        page += 1
        time.sleep(random.uniform(3.0, 7.0))
    return rows

A 40-page cap roughly matches Idealista's own deep-paging cutoff and keeps each worker bounded. If you genuinely need more, split the search by price band or property type and run those subqueries in parallel rather than digging deeper into a single result set.
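Splitting a deep search by price band can be as simple as generating contiguous ranges and running one bounded crawl per range. The sketch below only produces the bands and merges results. How a band maps onto an Idealista filter URL is left to a caller-supplied build_url function, because the exact filter syntax is not covered here and should be copied from the site's own filter links. crawl_one is a hypothetical wrapper around crawl_municipality that must create and own its own browser, since a Selenium driver should not be shared across threads.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable


def price_bands(low: int, high: int, step: int) -> list[tuple[int, int]]:
    """Split [low, high] into contiguous, non-overlapping (min, max) bands of width `step`."""
    if step <= 0 or high < low:
        raise ValueError("step must be positive and high >= low")
    bands, start = [], low
    while start <= high:
        end = min(start + step - 1, high)
        bands.append((start, end))
        start = end + 1
    return bands


def crawl_bands(bands: list[tuple[int, int]], build_url: Callable[[int, int], str],
                crawl_one: Callable[[str], list[dict]], max_workers: int = 4) -> list[dict]:
    """Run one bounded crawl per band in parallel and merge rows, deduplicating by id."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        results = list(pool.map(lambda band: crawl_one(build_url(*band)), bands))
    seen, merged = set(), []
    for rows in results:
        for row in rows:
            if row["id"] not in seen:  # a listing can shift bands if its price changes mid-run
                seen.add(row["id"])
                merged.append(row)
    return merged


print(price_bands(0, 299_999, 100_000))
# [(0, 99999), (100000, 199999), (200000, 299999)]
```

Narrow bands keep each subquery under the page cap; widen or narrow the step per municipality based on how many results it returns.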

Solving DataDome captchas mid-session without losing state

Anyone learning how to scrape data from Idealista will eventually hit the DataDome interstitial. The good news, anecdotally confirmed across several scraper communities, is that once you solve the challenge in a session, it usually does not reappear for the rest of that browsing session. You can lean on that with a few concrete tactics.

The simplest is a manual-solve hook for development:

def open_with_captcha_pause(driver, url):
    driver.get(url)
    source = driver.page_source.lower()
    if "captcha" in source or "datadome" in source:
        input("Solve the captcha in the browser, then press Enter to continue...")

For anything beyond a one-off, level up:

- Persistent profile. Pass --user-data-dir=./chrome-profile so cookies, including the DataDome session cookie, survive runs.

- Switch IP after a challenge. A captcha on a residential IP is recoverable; a captcha that recurs on the same IP usually means it is burned. Cycle to a new exit and retry.

- Slow down. Insert a longer randomized backoff (30 to 90 seconds) before retrying after a challenge, rather than hammering the same URL.

- Escalate to a managed solver. When throughput matters more than control, route the request through a scraping API that handles fingerprinting and challenges on its side.

Treat the captcha as a signal that your fingerprint, IP, or pacing crossed a threshold, not as a single popup to dismiss.
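The tactics above can be combined into a single retry loop. This is a hedged sketch: the challenge check reuses the same substring heuristic as the manual hook, and load, rotate_proxy, and sleep are injected callables (hypothetical names) so the policy can be tested without a browser. In a real run, load would call driver.get and return driver.page_source, and rotate_proxy would rebuild the driver on a new exit IP.

```python
import random
import time
from typing import Callable


def is_challenge(html: str) -> bool:
    """Same heuristic as the manual hook: look for captcha/DataDome markers."""
    lowered = html.lower()
    return "captcha" in lowered or "datadome" in lowered


def fetch_with_backoff(
    url: str,
    load: Callable[[str], str],
    rotate_proxy: Callable[[], None],
    max_attempts: int = 3,
    backoff_range: tuple[float, float] = (30.0, 90.0),
    sleep: Callable[[float], None] = time.sleep,
) -> str:
    """Load `url`; on a challenge, wait a randomized 30 to 90 s, switch IP, and retry."""
    for attempt in range(1, max_attempts + 1):
        html = load(url)
        if not is_challenge(html):
            return html
        if attempt == max_attempts:
            break
        sleep(random.uniform(*backoff_range))  # slow down instead of hammering the URL
        rotate_proxy()  # a recurring challenge on one IP usually means it is burned
    raise RuntimeError(f"still challenged after {max_attempts} attempts: {url}")
```

Raising after a bounded number of attempts lets the caller park the URL for a later run instead of burning through the proxy pool on one stubborn page.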

Scaling without getting blocked: proxies, headers, and rate control

The hard part of how to scrape data from Idealista at production volume is not parsing the HTML; it is staying ahead of the blocks. Once your prototype works, scale exposes new failure modes. Datacenter IPs that survived your first 200 requests get challenged in bulk, identical headers across every worker stand out, and tightly synchronized requests look like a coordinated bot.

Layer your defenses in this order, and only add complexity when the previous layer stops working.

1. Residential or mobile proxies, geo-targeted. Idealista's traffic is dominated by Spanish, Italian, and Portuguese consumers. Residential IPs from those countries blend in; a US datacenter ASN does not. With selenium-wire:

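from seleniumwire.undetected_chromedriver import Chrome

seleniumwire_options = {
    "proxy": {
        "http": "http://user:pass@es.proxy.example:8000",
        "https": "http://user:pass@es.proxy.example:8000",
        "no_proxy": "localhost,127.0.0.1",
    }
}
driver = Chrome(seleniumwire_options=seleniumwire_options, use_subprocess=True)

One caveat: selenium-wire routes Chrome through a local man-in-the-middle proxy that opens its own upstream TLS connections, so the handshake the site sees is not Chrome's. If challenges persist despite clean residential IPs, check whether this layer is the tell.

2. Header rotation. Cycle a small pool of realistic Chrome 12x headers (matching User-Agent, sec-ch-ua, and Accept-Language). Keep them coherent; mismatched client hints are flagged faster than any single bad header.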

3. Concurrency limits. Cap workers per IP at 1, per region at 4 to 8, and per crawl at whatever your proxy plan supports. Add jittered delays of 3 to 10 seconds between page fetches so your traffic does not pulse.

4. A managed scraping API as a safety net. Once you pass a few thousand pages a day, maintaining your own fingerprinting stack costs more than it saves. A managed Scraper API handles IP rotation, challenge solving, and retry logic behind a single endpoint, which lets you keep your parsing code and just swap the fetch layer. Treat it as a fallback path rather than the default.
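The concurrency caps and jitter from rule 3 can be enforced in-process with a bounded semaphore per region plus a randomized pause. The sketch below is a minimal thread-based version; RegionThrottle is a hypothetical name, and fetch is whatever per-worker function owns one browser and one IP. Each worker holds its slot during the 3 to 10 second pause, which bounds the region's overall request rate rather than just its parallelism.

```python
import random
import threading
import time
from typing import Callable


class RegionThrottle:
    """Caps concurrent fetches per region and adds jittered pauses between them."""

    def __init__(self, max_concurrent: int = 4,
                 delay_range: tuple[float, float] = (3.0, 10.0),
                 sleep: Callable[[float], None] = time.sleep):
        self._sem = threading.BoundedSemaphore(max_concurrent)
        self._delay_range = delay_range
        self._sleep = sleep

    def run(self, fetch: Callable[[str], str], url: str) -> str:
        # At most max_concurrent fetches (and their pauses) in flight for this region.
        with self._sem:
            result = fetch(url)
            # Holding the slot during the jittered pause keeps traffic from pulsing.
            self._sleep(random.uniform(*self._delay_range))
        return result
```

Create one RegionThrottle per country (for example, one for Spanish exits and one for Italian ones) and share it across that region's worker threads.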

Saving, deduping, and exporting the scraped property dataset

Printing rows to stdout is fine for a demo and useless in production. Stream writes to disk as you go so a crash on hour eight does not wipe hours one through seven.

import json
import sqlite3


def upsert_sqlite(db_path, rows):
    conn = sqlite3.connect(db_path)
    try:
        conn.execute(
            "CREATE TABLE IF NOT EXISTS listings ("
            "id TEXT PRIMARY KEY, url TEXT, title TEXT, price TEXT, "
            "details TEXT, description TEXT, scraped_at TEXT)"
        )
        # INSERT OR REPLACE keyed on id: a re-scraped listing overwrites its old row.
        conn.executemany(
            "INSERT OR REPLACE INTO listings VALUES (?,?,?,?,?,?,datetime('now'))",
            [(r["id"], r["url"], r["title"], r["price"],
              json.dumps(r["details"]), r["description"]) for r in rows],
        )
        conn.commit()
    finally:
        conn.close()  # close the connection even if a write fails

Use the inmueble/<id> slug as the primary key; titles and prices change, IDs do not. For lighter pipelines, append-only JSONL plus a daily dedup pass on the same key works fine.
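For the lighter JSONL route, here is a minimal sketch: append one JSON object per line as rows arrive, then run a dedup pass that keeps the most recent record per id. The function names are illustrative, and "most recent" here simply means the last line written for that id.

```python
import json
from pathlib import Path


def append_jsonl(path: str, rows: list[dict]) -> None:
    """Append rows as one JSON object per line; earlier batches are never rewritten."""
    with open(path, "a", encoding="utf-8") as f:
        for r in rows:
            f.write(json.dumps(r, ensure_ascii=False) + "\n")


def dedup_jsonl(src: str, dst: str) -> int:
    """Keep the last record seen for each id; return the number of unique listings."""
    latest: dict[str, dict] = {}
    for line in Path(src).read_text(encoding="utf-8").splitlines():
        if line.strip():
            rec = json.loads(line)
            latest[rec["id"]] = rec  # later lines overwrite earlier ones
    with open(dst, "w", encoding="utf-8") as f:
        for rec in latest.values():
            f.write(json.dumps(rec, ensure_ascii=False) + "\n")
    return len(latest)
```

Writing the deduplicated output to a separate file leaves the raw append log intact, so you can always rebuild it if the dedup logic changes.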

Tracking newly listed Idealista properties on a schedule

Most teams asking how to scrape data from Idealista on a recurring basis care less about a one-shot bulk dump and more about what showed up in the last 24 hours. Buyer agents, investor funds, and lead-gen tools all want a fresh delta, not the full archive. Idealista exposes a recency sort with ?ordenado-por=fecha-publicacion-desc, which is the seed for a lightweight change-tracking workflow.

The pattern is simple: run the crawler against the recency-sorted URL for each watched municipality, diff the resulting IDs against your last run, and emit only the new rows.

import pathlib


def diff_new_listings(rows, seen_ids_path):
    path = pathlib.Path(seen_ids_path)
    seen = set(path.read_text().splitlines()) if path.exists() else set()
    new_rows = []
    for r in rows:
        if r["id"] not in seen:  # also drops repeats within this run
            seen.add(r["id"])
            new_rows.append(r)
    path.write_text("\n".join(sorted(seen)))
    return new_rows

Schedule the job hourly via cron or Airflow, alert on new rows through Slack or email, and you have a working real-estate radar without a custom backend.

Troubleshooting: empty results, stale selectors, and ChromeDriver crashes

A few patterns account for most failures readers report.

- use_subprocess quirk. Recent undetected-chromedriver releases need use_subprocess=True on some platforms to avoid a destructor error that can leave the driver in a broken state. Verify against the upstream repo, since the workaround changes across versions.

- NoSuchElementException on cards. Usually Idealista swapped a class name. Re-inspect the DOM and prefer contains(@class, ...) over strict equality.

- /en/ layout differences. The English locale ships a slightly different DOM. Pin to the locale you actually want, and keep selectors per locale if you cross sites.

- Chrome and driver mismatch. Pin both. A SessionNotCreatedException almost always means the patched driver and your installed Chrome are off by a major version.
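One way to keep the two in lockstep is to read your installed Chrome's major version and pass it to undetected-chromedriver's version_main argument, which tells it which driver major version to fetch. This is a sketch that assumes a Chrome binary named google-chrome on PATH (typical on Linux); the binary name and location vary by OS and install method, so treat it as a placeholder. The helper names are hypothetical.

```python
import re
import subprocess


def parse_chrome_major(version_output: str) -> int:
    """'Google Chrome 120.0.6099.109' -> 120."""
    m = re.search(r"(\d+)\.\d+\.\d+\.\d+", version_output)
    if not m:
        raise ValueError(f"unrecognized version string: {version_output!r}")
    return int(m.group(1))


def installed_chrome_major(binary: str = "google-chrome") -> int:
    """Ask the local Chrome binary for its version (the binary name is an assumption)."""
    out = subprocess.run([binary, "--version"], capture_output=True, text=True, check=True)
    return parse_chrome_major(out.stdout)


def make_pinned_driver():
    # Imported lazily so the helpers above work without undetected-chromedriver installed.
    import undetected_chromedriver as uc

    return uc.Chrome(version_main=installed_chrome_major(), use_subprocess=True)
```

Logging the detected version at startup also makes SessionNotCreatedException reports much quicker to diagnose.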

Key Takeaways

- Idealista's blocking stack combines DataDome challenges, TLS fingerprinting, and IP reputation, so a single tactic like rotating User-Agents will not hold up at scale.

- Use the inmueble/<id> slug as your dedup key and primary identifier; titles and prices change, IDs do not.

- Bound your pagination (around 40 pages per municipality) and split deeper queries by price band or property type rather than recursing forever.

- Layer your defenses in order: real-browser fingerprint, geo-targeted residential IPs, header rotation, polite pacing, then a managed scraping API as a fallback.

- Track new listings by sorting on fecha-publicacion-desc and diffing IDs against your last run; this is what turns a scraper into a useful product.

FAQ

Is scraping Idealista legal, and how does GDPR apply to agent contact details?

Public listing data is generally lawful to collect at respectful rates, but agent names, phones, and emails are personal data under the GDPR and require a documented lawful basis, retention limits, and a deletion path. Storing or redistributing agent contacts at scale without those controls is the riskiest part of any Idealista pipeline. Consult counsel for jurisdiction-specific guidance.

Does Idealista offer an official API for property data?

Idealista does not publish a general-purpose public listings API. Its developer program is oriented toward partner integrations and ad placement rather than open data access. For most analytics or research use cases, scraping the public site or buying a managed real-estate dataset are the realistic options. Check the current developer portal directly before assuming any specific endpoint is available.

Why does Idealista keep showing a DataDome captcha even when I use undetected-chromedriver?

undetected-chromedriver addresses only the browser-automation layer: the navigator.webdriver flag and a handful of Chrome internals. DataDome also evaluates TLS fingerprints, header coherence, IP reputation, and behavioral pacing. If your IP is on a flagged datacenter range, or your headers are inconsistent, the challenge will keep firing. Add residential proxies and slower pacing first.

Should I use Selenium, HTTPX with parsel, or a managed scraping API for Idealista?

It depends on volume. For a few hundred pages, HTTPX with parsel is fastest. For reliable per-page extraction with JS rendering, Selenium with undetected-chromedriver is the proven middle ground. Above a few thousand pages a day, a managed scraping API removes the proxy and challenge-solving overhead, and is usually cheaper than maintaining your own fingerprinting stack.

Can the same scraper work on Idealista's Italian and Portuguese sites and on rental listings?

Largely, yes. The Italian (idealista.it) and Portuguese (idealista.pt) sites typically share the Spanish layout, and rentals follow an alquiler-viviendas pattern that mirrors venta-viviendas. Verify selectors against live pages, since Idealista occasionally A/B tests locale-specific tweaks around card layouts and pagination.

Conclusion

Figuring out how to scrape data from Idealista is mostly an exercise in respecting the layered defenses the site has built up over the years. Get the URL map right, use a real browser fingerprint with undetected-chromedriver, bound your pagination, dedupe on the inmueble/<id> slug, and stream writes to disk so a crash never costs you a full run. Treat DataDome challenges as a signal that your IP, fingerprint, or pacing crossed a line, not as a popup to click through. Once you cross from prototype into production scale, the layered playbook (residential proxies, geo-targeting, header rotation, retry logic) starts paying for itself almost immediately.

If you would rather skip the proxy plumbing and challenge solving entirely, WebScrapingAPI's Scraper API handles the fetch layer behind a single endpoint, returning HTML you can drop straight into the parsers above. Either way, the workflow here gives you a clean path from a single-machine prototype to a scheduled pipeline that tracks new Spanish, Italian, and Portuguese listings on a useful cadence.