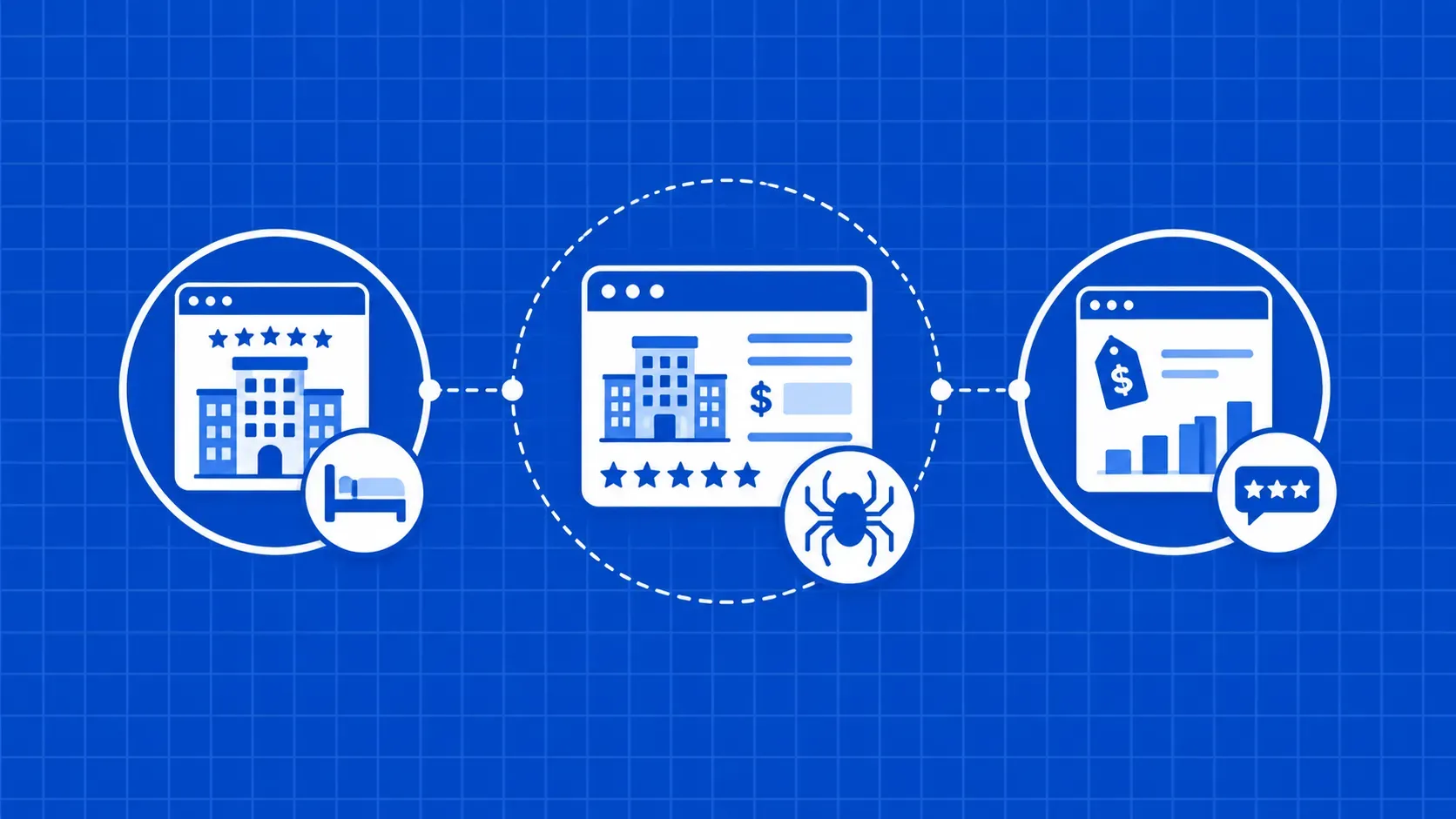

TL;DR: This guide walks through web scraping Booking.com end to end in Python: pulling search listings, hotel pages, nightly prices, and guest reviews. It covers two complementary methods (a Selenium Wire workflow for JS-rendered pages, and a faster path that calls Booking.com's internal `/dml/graphql` endpoint directly), plus an anti-block playbook, currency handling, and a workaround for the roughly 1,000-result paging cap.

Booking.com is the kind of dataset travel and hospitality teams keep coming back to: live nightly rates, competitor positioning, supply by neighborhood, guest sentiment by property. The catch is that none of it is exposed through an open API for the general public, so if you want it programmatically, you end up scraping Booking.com yourself in some form. This tutorial shows two practical Python paths and ties them together with the production concerns that usually bite people in the second week.

At the time of writing, Booking.com is one of the largest accommodation platforms on the web, with millions of bookable properties across hotels, resorts, and short stays. (We'll keep specific listing counts approximate; the company's public numbers move around.) The platform is heavily JavaScript-driven and ships real anti-bot defenses, so naive `requests.get` scripts tend to get blocked or receive incomplete pages before they return anything useful.

You'll see how to run a Selenium-based scraper for search results, how to reverse-engineer the same data out of the internal GraphQL endpoint, how to pull hotel detail pages, prices, and reviews, and how to scale past the result cap with sitemaps and query partitioning. Code is Python 3.10+ and assumes you're comfortable with DevTools and CSS selectors.
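To make the query-partitioning idea concrete before the scraping details, here is a minimal sketch of recursive bisection: keep splitting a filter range until every slice reports no more results than the paging cap, so each slice can be paged through completely. It assumes you can read a total result count for a given filter, and it uses price as the split dimension purely for illustration. `count_results`, `partition_price_range`, and `fake_count` are hypothetical names, and `RESULT_CAP` only mirrors the approximate cap mentioned above; it is not a documented Booking.com constant.

```python
from collections.abc import Callable

# Approximate paging cap; an assumption, not an official limit.
RESULT_CAP = 1000


def partition_price_range(
    low: int,
    high: int,
    count_results: Callable[[int, int], int],
    cap: int = RESULT_CAP,
) -> list[tuple[int, int]]:
    """Split the half-open price range [low, high) into bands that each hold <= cap results.

    count_results(lo, hi) is a hypothetical callback: in practice you would
    implement it with either scraping method by reading the total count
    shown for that filter.
    """
    total = count_results(low, high)
    if total == 0:
        # Nothing to collect in this band, so drop it entirely.
        return []
    if total <= cap or high - low <= 1:
        # Either the band is small enough to page through fully, or it cannot
        # be split further on price (you would then split on another
        # dimension, such as district or star rating).
        return [(low, high)]
    mid = (low + high) // 2
    return partition_price_range(low, mid, count_results, cap) + partition_price_range(
        mid, high, count_results, cap
    )


# Demo with a fake counter: 5,000 synthetic listings, one per price unit from 0 to 4999.
def fake_count(lo: int, hi: int) -> int:
    return max(0, min(hi, 5000) - max(lo, 0))


bands = partition_price_range(0, 5000, fake_count)
print(bands)
```

Bisection keeps the number of count lookups roughly logarithmic in how far a range overshoots the cap, and each returned band can then be scraped as an ordinary paginated search.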