TL;DR: Scrapy Splash pairs Scrapy's fast crawling engine with the Splash headless browser to render JavaScript-heavy pages. This Scrapy Splash tutorial walks you through Docker setup, Scrapy project configuration, SplashRequest basics, Lua scripts for scrolling and clicking, proxy integration, and fixes for the most common errors you will encounter.

Scrapy is one of the most efficient web crawling frameworks in the Python ecosystem, but it has a well-known blind spot: it cannot execute JavaScript. Content that a site loads through client-side rendering, AJAX calls, or a single-page application framework never appears in the HTML a vanilla Scrapy spider downloads. This is exactly the problem Scrapy Splash solves.

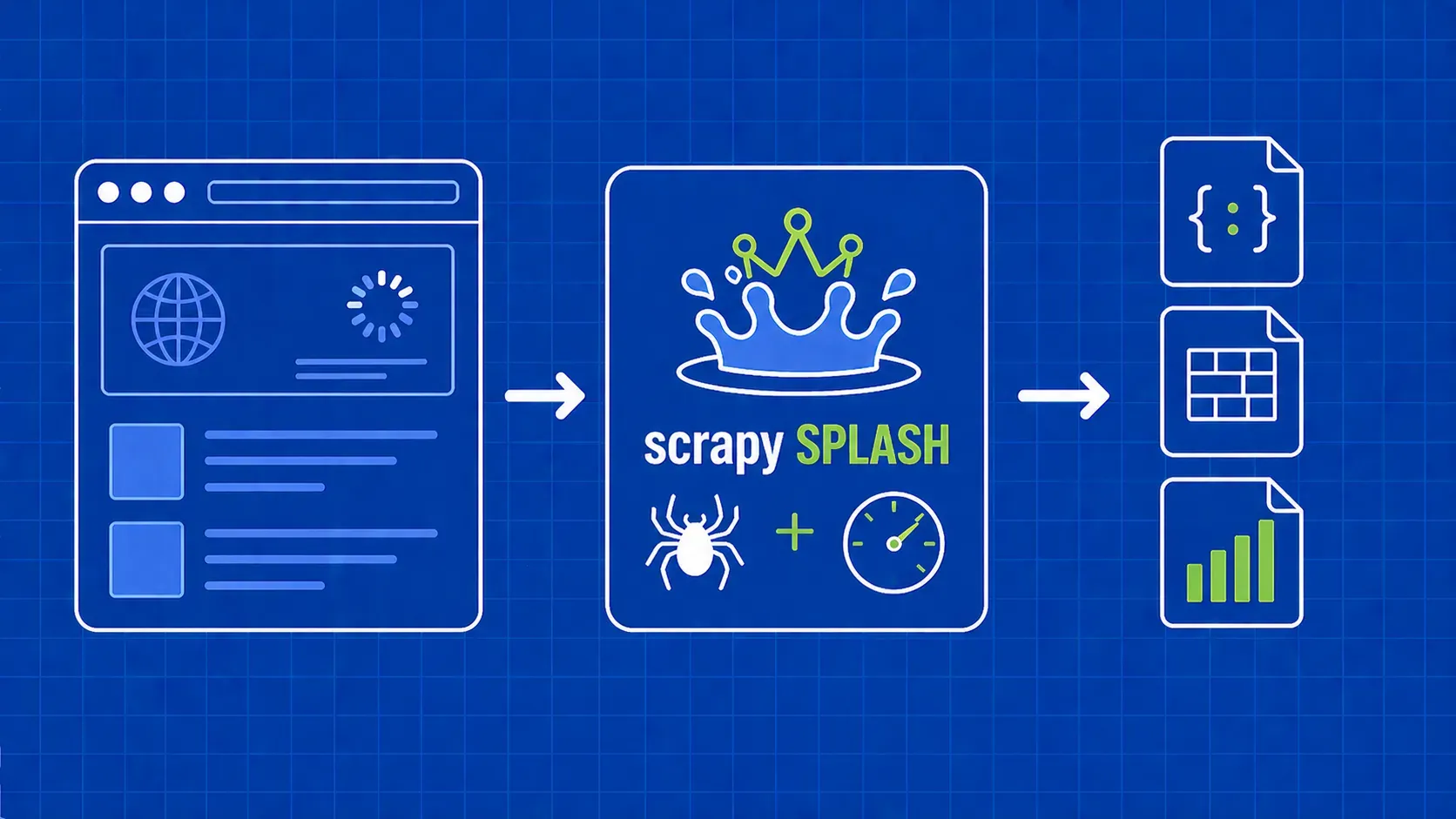

Scrapy Splash is an integration layer between Scrapy and the Splash headless browser. Splash is a lightweight, Qt-based rendering service developed by Zyte (the same team behind Scrapy) that exposes an HTTP API. Instead of running a full desktop browser, Splash loads a page in a stripped-down WebKit engine, executes the JavaScript, and returns the fully rendered HTML to your spider. Your parse methods keep working with standard CSS and XPath selectors, exactly as they do for static pages.
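To make the HTTP API idea concrete, here is a minimal sketch that talks to Splash directly, without Scrapy, using only the standard library. It assumes a Splash instance is already running locally on its default port (8050) and uses Splash's `render.html` endpoint with the `url` and `wait` arguments. The helper names `build_render_url` and `fetch_rendered_html` are hypothetical and exist only for illustration.

```python
from urllib.parse import urlencode
from urllib.request import urlopen

# Assumption: Splash is running locally on its default port, 8050.
SPLASH_URL = "http://localhost:8050"


def build_render_url(page_url: str, wait: float = 0.5,
                     splash_url: str = SPLASH_URL) -> str:
    """Build a request URL for Splash's render.html endpoint.

    `url` is the page to render; `wait` is how many seconds Splash
    should pause after the page loads so that scripts can run.
    """
    query = urlencode({"url": page_url, "wait": wait})
    return f"{splash_url}/render.html?{query}"


def fetch_rendered_html(page_url: str, wait: float = 0.5) -> str:
    """Ask Splash to render the page and return the resulting HTML.

    Requires a reachable Splash instance. Decoding as UTF-8 is an
    assumption made to keep the sketch short.
    """
    with urlopen(build_render_url(page_url, wait), timeout=30) as response:
        return response.read().decode("utf-8")
```

Calling `fetch_rendered_html("https://example.com")` with Splash running should return the page's HTML after JavaScript has executed. In a real project the scrapy-splash integration issues an equivalent request on your behalf, so you rarely need to build these URLs by hand.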

In this guide you will set up Docker and Splash from scratch, configure your Scrapy project, write spiders that render dynamic pages, create Lua scripts for advanced interactions, wire up proxies, and troubleshoot the errors that trip up most newcomers.