TL;DR: A Python headless browser lets you render JavaScript, click through SPAs, and scrape pages whose content plain HTTP clients never see. Selenium is the safest default, Playwright is the modern pick for new code, Pyppeteer and Splash still have niche uses, and a hosted browser API is what you reach for when anti-bot defenses or scale start to bite.

If you've ever tried to scrape a JavaScript-heavy site with `requests` and ended up with an empty `<div id="app">`, you already know why Python headless browsers exist. A headless browser is a real browser engine, usually Chromium or Firefox, that loads pages and runs JavaScript without opening a visible window. You drive it from Python much as you'd click around in Chrome by hand, only scripted, faster, and on a server.
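To make the empty-shell symptom concrete, here is a minimal standard-library sketch that checks whether fetched HTML contains a mount point with nothing inside it. The names `AppShellProbe` and `looks_like_empty_shell` are hypothetical, the `app` id is an assumption (real sites also use ids such as `root`), and the sample HTML strings are invented for illustration. Treat it as a rough heuristic for deciding whether a page needs a headless browser, not a reliable detector.

```python
from html.parser import HTMLParser


class AppShellProbe(HTMLParser):
    """Records whether the element with a given id holds any text or child tags."""

    # Void elements have no closing tag, so they must not change nesting depth.
    VOID = {"area", "base", "br", "col", "embed", "hr", "img",
            "input", "link", "meta", "source", "track", "wbr"}

    def __init__(self, target_id="app"):
        super().__init__()
        self.target_id = target_id
        self.depth = 0            # nesting depth inside the target; 0 means outside
        self.found = False        # did we see the target element at all?
        self.has_content = False  # did the target contain text or child tags?

    def handle_starttag(self, tag, attrs):
        if self.depth:
            # Any tag opened inside the target counts as content.
            self.has_content = True
            if tag not in self.VOID:
                self.depth += 1
        elif dict(attrs).get("id") == self.target_id:
            self.found = True
            if tag not in self.VOID:
                self.depth = 1

    def handle_endtag(self, tag):
        if self.depth and tag not in self.VOID:
            self.depth -= 1

    def handle_data(self, data):
        # Whitespace-only text does not count as rendered content.
        if self.depth and data.strip():
            self.has_content = True


def looks_like_empty_shell(html, target_id="app"):
    """Return True if the mount point exists but has nothing rendered inside it."""
    probe = AppShellProbe(target_id)
    probe.feed(html)
    probe.close()
    return probe.found and not probe.has_content


# Invented samples: what a plain HTTP client might receive versus a rendered DOM.
static_html = '<html><body><div id="app"></div><script src="/bundle.js"></script></body></html>'
rendered_html = '<html><body><div id="app"><h1>Products</h1></div></body></html>'

print(looks_like_empty_shell(static_html))    # True
print(looks_like_empty_shell(rendered_html))  # False
```

If a check like this returns `True` for the body your HTTP client received, the content is probably being built client-side by JavaScript, which is the situation a headless browser is meant to handle.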

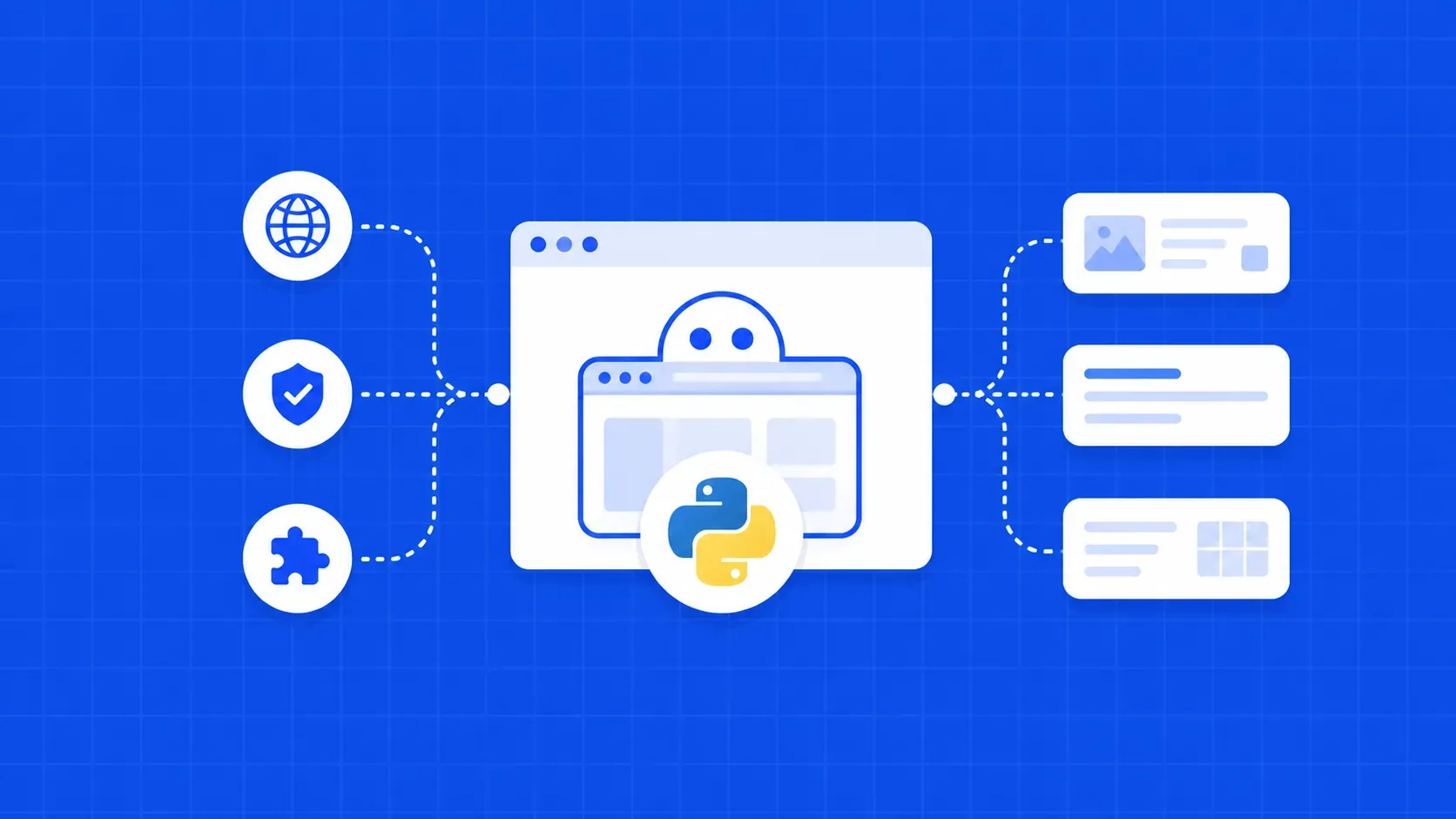

The Python headless browser landscape has shifted a lot since the Selenium-only days. Playwright now ships an officially supported Python binding, Pyppeteer's maintenance has slowed, Splash is still around for Scrapy users, and a wave of hosted browser APIs has emerged for teams that don't want to babysit Chromium pods at 3 a.m. Picking the right tool is less about "which is best" and more about which is best for your target site, scale, and anti-bot exposure.

This guide walks through every option that matters in 2026, with runnable Python code, honest tradeoffs, benchmark numbers with their caveats stated, and a decision tree at the end. By the time you finish, you should know which Python headless browser to install, when to run it yourself, and when to hand the whole thing off to a managed API.