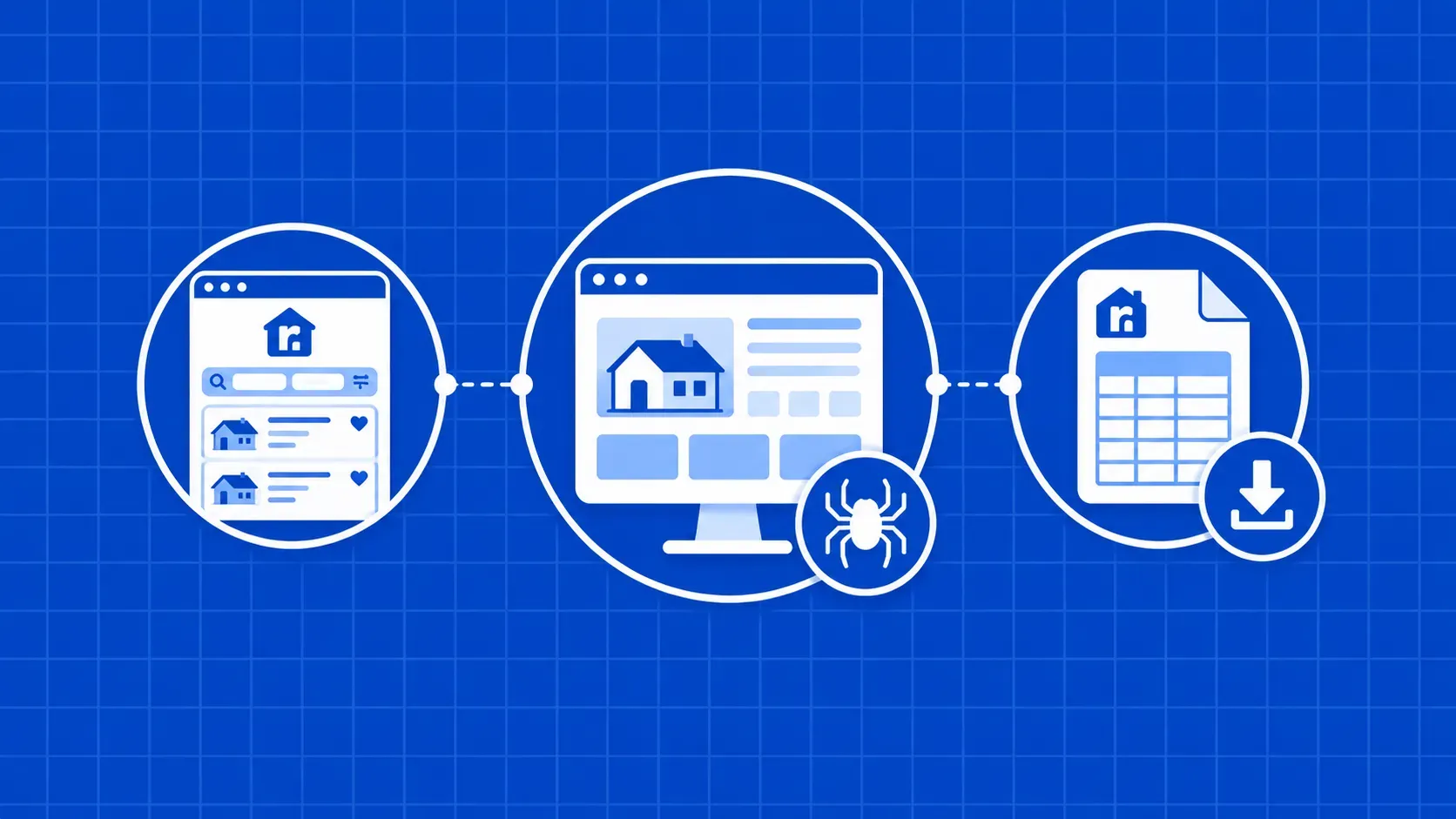

TL;DR: To scrape Realtor.com cleanly, three things matter most: selectors that stay stable despite the site's hashed class names, a request layer that gets past Realtor.com's anti-bot stack, and code that walks both list pages and detail pages. This guide is the full Python build, with anti-block tactics and LLM-ready exports.

If you need property data at scale, learning how to scrape Realtor.com is one of the highest-leverage skills you can pick up. Realtor.com is a major U.S. real estate marketplace, listing homes for sale, rentals, and live housing-market information, and most of that data is rendered into HTML you can parse with Python.

The catch is that Realtor.com is a high-value target with a hardened anti-bot stack. Naive `requests.get()` calls often return CAPTCHA HTML instead of listings, hashed class names rotate without notice, and the richest fields hide inside embedded JSON blobs. The wrong toolchain can burn a week before producing a single clean row.
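To make the "embedded JSON blob" idea concrete, here is a minimal sketch that pulls a JSON payload out of a `<script>` tag using only the standard library. The script id `__NEXT_DATA__`, the `props.pageProps.listing` path, and the sample HTML are assumptions for illustration, not confirmed details of Realtor.com's markup; inspect a real page to find the actual tag and keys.

```python
import json
from html.parser import HTMLParser


class EmbeddedJSONParser(HTMLParser):
    """Collect the text inside the <script> tag that has a given id."""

    def __init__(self, script_id):
        super().__init__()
        self.script_id = script_id
        self._inside = False
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("id") == self.script_id:
            self._inside = True

    def handle_endtag(self, tag):
        if tag == "script":
            self._inside = False

    def handle_data(self, data):
        if self._inside:
            self.chunks.append(data)


def extract_embedded_json(html, script_id="__NEXT_DATA__"):
    """Return the parsed JSON blob, or None if the tag is absent.

    The default script_id is an assumption; a missing tag can also
    mean the response was a block or CAPTCHA page.
    """
    parser = EmbeddedJSONParser(script_id)
    parser.feed(html)
    parser.close()
    raw = "".join(parser.chunks).strip()
    return json.loads(raw) if raw else None


# Hypothetical page: a hashed class name next to a structured JSON payload.
SAMPLE_HTML = """
<html><body>
<div class="card-x9f3a">$450,000</div>
<script id="__NEXT_DATA__" type="application/json">
{"props": {"pageProps": {"listing": {"price": 450000, "beds": 3}}}}
</script>
</body></html>
"""

data = extract_embedded_json(SAMPLE_HTML)
listing = data["props"]["pageProps"]["listing"]
print(listing["price"], listing["beds"])
```

Reading the JSON payload avoids depending on the hashed class name (`card-x9f3a` here) at all, which is why it tends to be the more durable source when it is available. A `None` return is also a cheap signal that the response may not be a real listing page.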

This guide walks the full Python build end to end: which fields you can actually pull, the selectors that survive Realtor.com's React rendering, how to route requests through a scraping API that handles proxies and CAPTCHAs for you, and how to extract detail-page data like agent contacts, amenities, and latitude/longitude. We'll also cover throttling, error handling, legal limits, and how to feed listings into an LLM for downstream analysis.

You'll leave with a working scraper, not a copy-pasted snippet that breaks the next time the front-end ships.