TL;DR: This guide shows how to rotate proxies in Python end-to-end: pick the right proxy type, build and validate a pool, then rotate sequentially with `itertools.cycle`, randomly with `random.choice`, or asynchronously with `aiohttp`. We also pair IP rotation with User-Agent rotation and add status-aware retries so a single bad proxy does not kill your scrape.

If your Python scraper started returning 403s, 429s, or empty pages after running fine yesterday, the site is most likely throttling or banning your IP address. The fix most teams reach for is proxy rotation, and learning to rotate proxies in Python is a rite of passage for anyone scaling past a hobby script.
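Before reaching for proxies, it helps to decide in code what counts as "being blocked". The sketch below is a hypothetical heuristic, not a standard: it treats a 403, a 429, or a blank 200 page as a sign of IP-level throttling. `BLOCK_STATUSES` and `looks_blocked` are illustrative names, and real sites may signal blocks in other ways (CAPTCHA pages, redirects to a challenge), so treat the status set as an assumption to tune per target.

```python
# Assumption: statuses that commonly signal IP-level throttling or bans.
BLOCK_STATUSES = {403, 429}


def looks_blocked(status_code: int, body: str) -> bool:
    """Heuristic: does this response look like a ban/throttle rather than real content?"""
    if status_code in BLOCK_STATUSES:
        return True
    # A 200 with an empty or whitespace-only body is a common "soft block" symptom.
    return status_code == 200 and not body.strip()


print(looks_blocked(429, ""))                 # True
print(looks_blocked(200, "<html>ok</html>"))  # False
print(looks_blocked(200, "   "))              # True
```

With Requests, you would call it as `looks_blocked(resp.status_code, resp.text)` and only rotate when it returns `True`.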

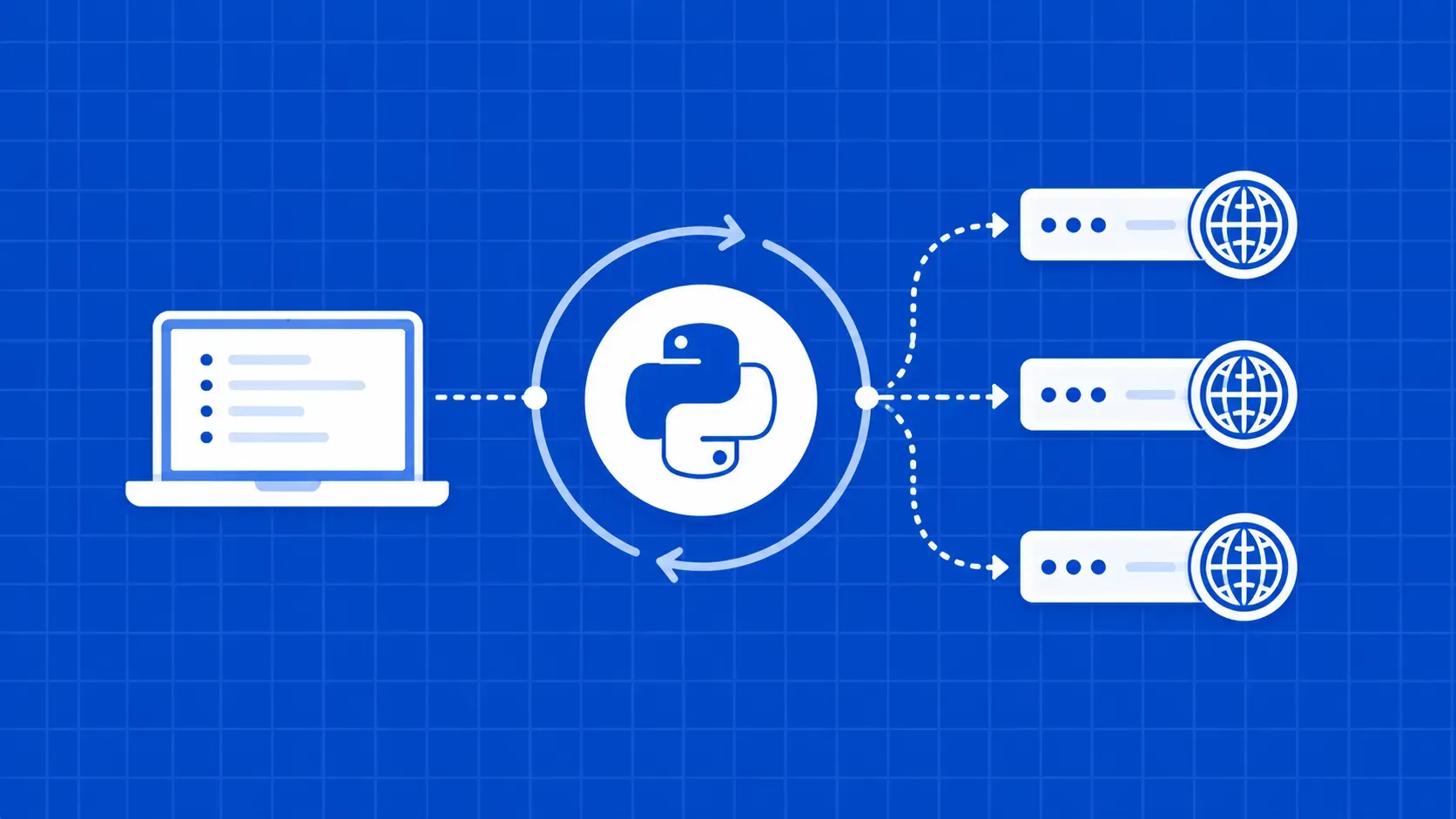

Proxy rotation in Python means changing the outbound IP per request, on a schedule or at random, so each request looks like it came from a different machine. Done well, it spreads load across many IPs, defeats per-IP rate limits, and makes scraper traffic harder for anti-bot systems to fingerprint. Done badly, with a stale list of free proxies and a blanket `try/except`, it just turns one banned IP into a pool of banned IPs.
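To make "changing the outbound IP per request" concrete, here is a minimal sketch of sequential rotation. It assumes Requests' `proxies` argument, which takes a dict keyed by URL scheme; the pool addresses are placeholders from the 203.0.113.0/24 documentation range, and `proxy_mappings` is a hypothetical helper name. With N proxies in the pool, each IP sees roughly 1/N of the traffic, which is what lets rotation stay under per-IP rate limits.

```python
from collections import Counter
from itertools import cycle

# Placeholder pool (TEST-NET-3 documentation addresses); swap in your own validated proxies.
PROXIES = [
    "http://203.0.113.10:8080",
    "http://203.0.113.11:8080",
    "http://203.0.113.12:8080",
]


def proxy_mappings(pool):
    """Yield one Requests-style proxies dict per request, cycling through the pool in order."""
    for proxy_url in cycle(pool):
        # Requests picks the proxy by the target URL's scheme, so set both keys.
        yield {"http": proxy_url, "https": proxy_url}


# With Requests installed, each call would go out through the next proxy:
#   mappings = proxy_mappings(PROXIES)
#   for url in urls:
#       resp = requests.get(url, proxies=next(mappings), timeout=10)

mappings = proxy_mappings(PROXIES)
usage = Counter(next(mappings)["https"] for _ in range(9))
print(usage)  # each of the 3 proxies is used exactly 3 times
```

Setting both keys matters: a mapping with only an `http` entry would not route HTTPS requests through that proxy.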

This article is a hands-on guide to rotating proxies in Python. We will choose a proxy type, build a validated pool, send a request through Requests, then walk through three rotation strategies (sequential, random, and async). We will pair IP rotation with header rotation, add real error handling, and finish with an honest buy-vs-build comparison.
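As a preview of how random rotation, header rotation, and error handling fit together, here is a minimal sketch that picks a random proxy and User-Agent per attempt and retries only on statuses that suggest a burned IP. To keep it library-agnostic and testable, the HTTP call is injected as a `fetch(url, proxy_url, headers)` callable returning `(status_code, body)`; that signature, the `RETRYABLE` status set, and the function names are assumptions for illustration, not part of any library.

```python
import random
from typing import Callable, Optional, Sequence, Tuple

# Assumption: these statuses usually mean "this IP/identity is throttled or burned".
RETRYABLE = {403, 429, 500, 502, 503, 504}

# Hypothetical fetch signature: (url, proxy_url, headers) -> (status_code, body).
Fetch = Callable[[str, str, dict], Tuple[int, str]]


def fetch_with_rotation(
    url: str,
    fetch: Fetch,
    proxies: Sequence[str],
    user_agents: Sequence[str],
    max_attempts: int = 3,
    rng: Optional[random.Random] = None,
) -> Tuple[int, str]:
    """Try up to max_attempts times, rotating proxy and User-Agent on each attempt."""
    rng = rng or random.Random()
    tried = set()
    last_status = None
    for _ in range(max_attempts):
        # Prefer proxies not tried yet; fall back to the full pool once all are used.
        candidates = [p for p in proxies if p not in tried] or list(proxies)
        proxy = rng.choice(candidates)
        tried.add(proxy)
        headers = {"User-Agent": rng.choice(user_agents)}
        try:
            status, body = fetch(url, proxy, headers)
        except OSError:
            # Dead proxy or timeout (Requests' exceptions subclass OSError): try another.
            continue
        if status not in RETRYABLE:
            # Success, or an error a new IP will not fix (e.g. 404): stop retrying.
            return status, body
        last_status = status
    raise RuntimeError(
        f"gave up on {url} after {max_attempts} attempts (last status: {last_status})"
    )


# A Requests-backed fetch could look like this (not executed here):
#   def requests_fetch(url, proxy, headers):
#       r = requests.get(url, proxies={"http": proxy, "https": proxy},
#                        headers=headers, timeout=10)
#       return r.status_code, r.text
```

Tracking already-tried proxies keeps a retry from landing on the same bad IP twice, and returning immediately on statuses like 404 avoids burning the pool on errors that rotation cannot fix.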