TL;DR: This guide shows how to scrape HTML tables in Golang end to end: choose between Colly, goquery, and golang.org/x/net/html, target the right <tbody>, model rows as a typed struct, and export clean JSON and CSV. You also get pagination, anti-block, and JavaScript-rendered table patterns.

If you have ever tried to feed an HTML <table> into a Postgres warehouse or a CSV for analysts, you know the problem: the data is right there in the DOM, but lifting it out reliably is its own small project. This guide walks through how to scrape HTML tables in Golang in a way that survives real pages, not just clean tutorials.

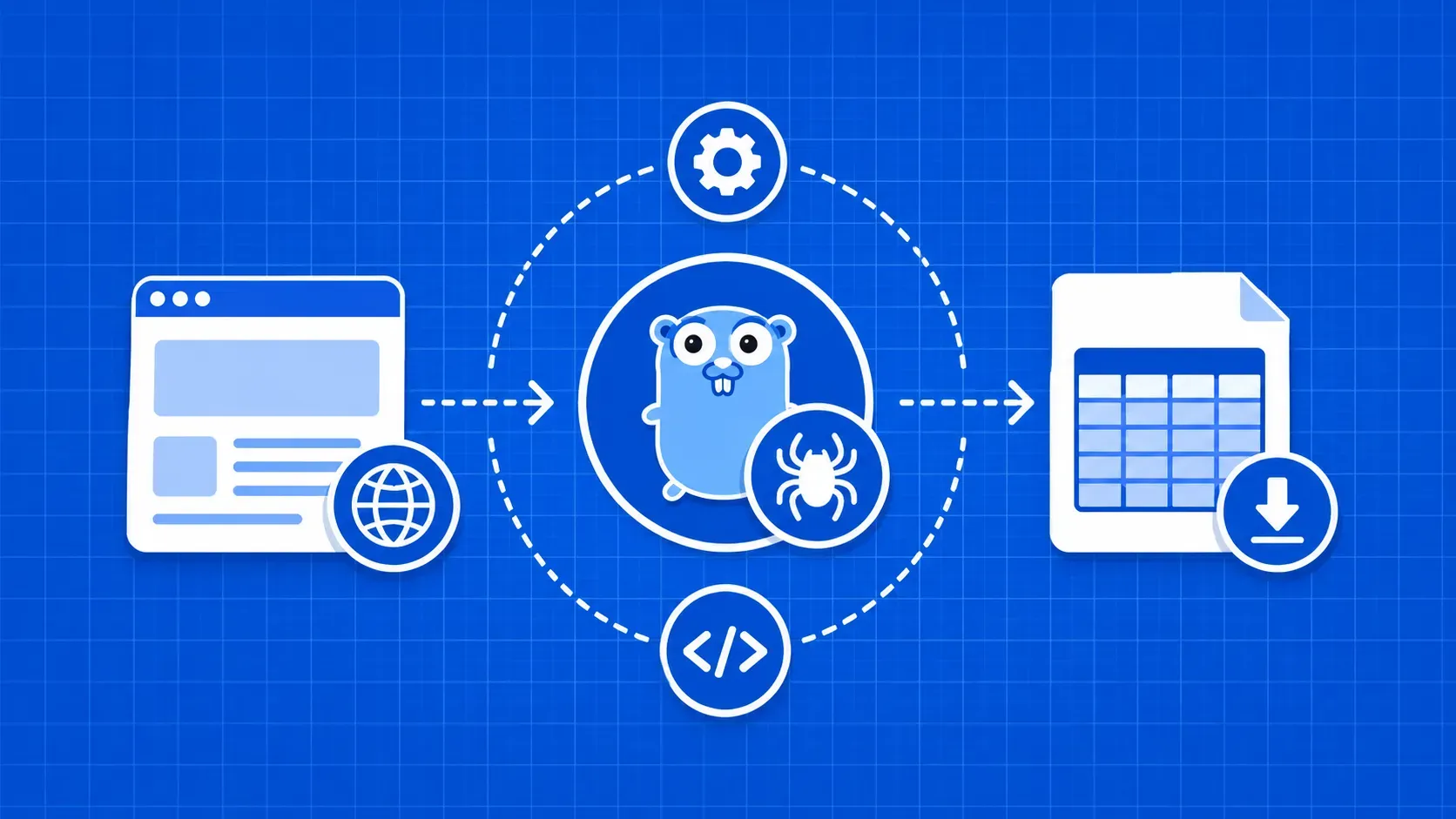

An HTML table is a structured grid of rows (<tr>) and cells (<td> or <th>). Scraping it means parsing the markup, walking those elements, and turning each row into a typed record your code can use downstream. In Go you have three serious options: Colly, goquery, and the lower-level golang.org/x/net/html. We will cover when each one fits, then build a working scraper around Colly v2.

You will learn how to inspect a page in DevTools, write a precise CSS selector, model rows as a struct, export both JSON and CSV, and handle pagination, JavaScript rendering, and anti-bot blocks. By the end, you will have a copy-paste-ready pattern for how to scrape HTML tables in Golang.
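
As a preview of the "model rows as a struct" and export steps, here is a minimal sketch that uses only the standard library. It assumes the cell text has already been pulled out of the table, so the hard-coded `rows` slice stands in for whatever a parser such as Colly would hand you. The `Country` type, its fields, and the `parseRow` helper are hypothetical names chosen for illustration, not part of any library.

```go
package main

import (
	"encoding/csv"
	"encoding/json"
	"fmt"
	"log"
	"os"
	"strconv"
	"strings"
)

// Country is a hypothetical record type; the fields are illustrative only.
type Country struct {
	Name       string `json:"name"`
	Population int    `json:"population"`
}

// parseRow converts the text of one table row's cells into a typed Country.
func parseRow(cells []string) (Country, error) {
	if len(cells) != 2 {
		return Country{}, fmt.Errorf("expected 2 cells, got %d", len(cells))
	}
	// Trim whitespace and drop thousands separators before parsing the number.
	raw := strings.ReplaceAll(strings.TrimSpace(cells[1]), ",", "")
	pop, err := strconv.Atoi(raw)
	if err != nil {
		return Country{}, fmt.Errorf("population %q: %w", cells[1], err)
	}
	return Country{Name: strings.TrimSpace(cells[0]), Population: pop}, nil
}

func main() {
	// Assumed input: cell text already extracted from each <tr> by a parser.
	rows := [][]string{
		{"Iceland", "372,520"},
		{" Malta ", "519,562"},
	}

	var records []Country
	for _, cells := range rows {
		rec, err := parseRow(cells)
		if err != nil {
			log.Fatal(err)
		}
		records = append(records, rec)
	}

	// Export as indented JSON.
	enc := json.NewEncoder(os.Stdout)
	enc.SetIndent("", "  ")
	if err := enc.Encode(records); err != nil {
		log.Fatal(err)
	}

	// Export as CSV with a header row.
	w := csv.NewWriter(os.Stdout)
	if err := w.Write([]string{"name", "population"}); err != nil {
		log.Fatal(err)
	}
	for _, r := range records {
		if err := w.Write([]string{r.Name, strconv.Itoa(r.Population)}); err != nil {
			log.Fatal(err)
		}
	}
	w.Flush()
	if err := w.Error(); err != nil {
		log.Fatal(err)
	}
}
```

Keeping the string-to-type conversion in one small function means a malformed cell surfaces as an error at parse time instead of as a bad value in the exported file.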